Prompt engineering

Page created by PauloPacheco.

Revision as of 14:41, 14 February 2023

Prompt engineering, or prompt design, is the practice of discovering the prompt that gets the best result from an AI system. [1] Developing prompts requires human intuition, and the results can look arbitrary. [2] Manual prompt engineering is laborious, may be infeasible in some situations, and can produce results that vary between model versions. [3] However, there have been developments in automated prompt generation, which rephrases the input to make it more model-friendly. [4]

A list of prompts for beginners is available as well as a compilation of the best prompt guides and tutorials.

Language models

In language models like GPT, output quality is influenced by a combination of prompt design, sample data, and temperature (a parameter that controls the “creativity” of the responses). Furthermore, to design a prompt properly the user needs a good understanding of the problem and good grammar skills, and must be prepared to produce many iterations. [5]

Therefore, to create a good prompt, pay attention to the following elements:

- The problem: the user needs to know clearly what they want the generative model to do, and in what context. [5] [6] For example, the AI can change the writing style of the output (“write a professional but friendly email” or “write a formal executive summary”). [6] Since the AI understands natural language, the user can think of the generative model as a human assistant. Asking “how would I describe the problem to my assistant who hasn’t done this task before?” may therefore help in clearly defining the problem and context. [5]

- Grammar check: use simple and clear terms. Avoid subtle meanings and complex sentences with predicates. Write short sentences, with specifics at the end of the prompt. Different conversation styles can be achieved with the use of adjectives. [5]

- Sample data: the AI may need information to perform the task that is being asked of it. This can be a text for paraphrasing or a copy of a resume or LinkedIn profile, for example. [6] The data provided must be consistent with the prompt. [5]

- Temperature: a parameter that influences how “creative” the response will be. For creative work, the temperature should be high (e.g. 0.9), while for strict factual responses a temperature of zero is better. [5]

- Test and iterate: test different combinations of the elements of the prompt. [5]
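To make the temperature element concrete, the sketch below shows how temperature is commonly applied when a language model samples its next token: the raw scores (logits) are divided by the temperature before being turned into probabilities. This is a general illustration, not something described in the cited sources; the function name and the three example scores are made up for the demonstration.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw token scores into probabilities, rescaled by temperature."""
    if temperature < 0:
        raise ValueError("temperature must be non-negative")
    if temperature == 0:
        # Limit case: all probability goes to the highest-scoring token(s),
        # so the output is deterministic ("strict factual" behavior).
        best = max(logits)
        winners = [1.0 if score == best else 0.0 for score in logits]
        total = sum(winners)
        return [w / total for w in winners]
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for three candidate next tokens.
example_logits = [2.0, 1.0, 0.5]
for t in (0.0, 0.5, 0.9):
    probs = softmax_with_temperature(example_logits, t)
    print(t, [round(p, 3) for p in probs])
```

A lower temperature concentrates probability on the top-scoring token, while a higher one spreads it across the alternatives, which is why higher values read as more “creative.”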

Besides this, a prompt can also have other elements such as the desired length of the response, the output format (GPT-3 can output various programming languages, charts, and CSVs), and specific phrases that users have discovered work well to achieve specific outcomes (e.g. “Let's think step by step,” “thinking backwards,” or “in the style of [famous person]”). [6]
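The elements above can be assembled programmatically, which makes the “test and iterate” step easier. The following is a minimal sketch, not a method from the cited sources: `build_prompt`, its parameter names, and the line labels (“Text:”, “Output format:”, “Length:”) are hypothetical choices, and the sample text is a placeholder.

```python
from itertools import product


def build_prompt(task, sample_data="", output_format="", length_hint="", cue=""):
    """Join the non-empty prompt elements, one per line."""
    parts = [
        task,
        f"Text:\n{sample_data}" if sample_data else "",
        f"Output format: {output_format}" if output_format else "",
        f"Length: {length_hint}" if length_hint else "",
        cue,  # e.g. a phrase users have found to work well
    ]
    return "\n".join(part for part in parts if part)


# Two writing styles and an optional cue phrase give four variants to compare.
styles = ["professional but friendly email", "formal executive summary"]
cues = ["", "Let's think step by step."]

variants = [
    build_prompt(
        task=f"Rewrite the text below as a {style}.",
        sample_data="(paste the source text here)",
        length_hint="under 150 words",
        cue=cue,
    )
    for style, cue in product(styles, cues)
]

for variant in variants:
    print(variant)
    print("---")
```

Each variant would then be sent to the model and the outputs compared by hand; the sketch only generates the prompt texts.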

Text-to-image generators

Some basic elements influence the quality of a text-to-image prompt. While these elements will work on different generator models, their impact on the final image quality may be different.

- Nouns: denote the subject of a prompt. The generator will still produce an image without a noun, but the result will not be meaningful. [7]

- Adjectives: can be used to try to convey an emotion or be used more technically (e.g. beautiful, magnificent, colorful, massive). [7]

- Artist names: the art style of the chosen artist will be included in the image generation. There is also an unbundling technique (figures 4a and 4b) that proposes a “long description of a particular style of the artist’s various characteristics and components instead of just giving the artist names.” [7]

- Style: instead of using the style of artists, the prompt can include keywords related to certain styles like “surrealism,” “fantasy,” “contemporary,” “pixel art”, etc. [7]

- Computer graphics: keywords like “octane render,” “Unreal Engine,” or “Ray Tracing” can enhance the effectiveness and meaning of the artwork. [7]

- Quality: keywords that describe the desired quality of the generated image (e.g. high, 4K, 8K). [7]

- Art platform names: these keywords are another way to include styles. For example, “trending on Behance,” “Weta Digital,” or “trending on artstation.” [7]

- Art medium: there is a multitude of art mediums that can be chosen to modify the AI-generated image like “pencil art,” “chalk art,” “ink art,” “watercolor,” “wood,” and others. [7]

In-depth lists with modifier prompts can be found here and here.

Midjourney

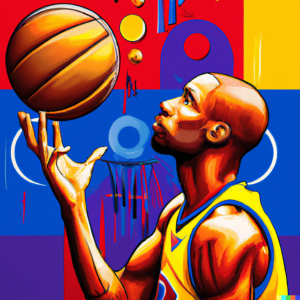

In Midjourney, a very descriptive text will result in a more vibrant and unique output. [8] Prompt engineering for this AI image generator relies on the same basic elements as the others (figure 5), but some keywords and options that are known to work well with this system are listed here.

- Style: standard, pixar movie style, anime style, cyber punk style, steam punk style, waterhouse style, bloodborne style, grunge style (figure 6). An artist’s name can also be used. [8]

- Rendering/lighting properties: volumetric lighting, octane render, softbox lighting, fairy lights, long exposure, cinematic lighting, glowing lights, and blue lighting (figure 7). [8]

- Style setting: adding the parameter --s <number> after the prompt will increase or decrease the stylize option (e.g. /imagine firefighters --s 6000). [8]

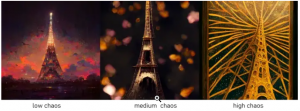

- Chaos: a setting to increase abstraction (figure 8) using the command /imagine prompt --chaos <a number from 0 to 100> (e.g. /imagine Eiffel tower --chaos 60). [8]

- Resolution: the resolution can be inserted in the prompt or using the standard commands --hd and --quality or --q <number>. [8]

- Aspect ratio: the default aspect ratio is 1:1. This can be modified with the command --ar <number>:<number> (e.g. /imagine jasmine in the wild flower --ar 4:3). A custom image size can also be specified using the command --w <number> --h <number> after the prompt. [8]

- Images as prompts: Midjourney allows the user to use images to get outputs similar to the one used. This can be done by inserting a URL of the image in the prompt (e.g. /imagine http://www.imgur.com/Im3424.jpg box full of chocolates). Multiple images can be used. [8]

- Weight: increases or decreases the influence of a specific prompt keyword or image on the output. For text prompts, the command ::<number> should be used after the keywords according to their intended impact on the final image (e.g. /imagine wild animals tiger::2 zebra::4 lions::1.5). [8]

- Filter: to keep unwanted elements from appearing in the output, use the --no <keyword> command (e.g. /imagine KFC fried chicken --no sauce). [8]
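The options in this list can be combined into a single command string. The sketch below assumes the syntax shown in the examples above, which comes from the cited guide and may differ between Midjourney versions. `imagine_command` and `weighted` are hypothetical helper names; they only build text and do not call any Midjourney API.

```python
def imagine_command(text, *, stylize=None, chaos=None, aspect_ratio=None,
                    quality=None, exclude=None, image_urls=()):
    """Build a /imagine command string from the options listed above."""
    if chaos is not None and not 0 <= chaos <= 100:
        raise ValueError("chaos must be between 0 and 100")
    # Image URLs, if any, go before the text prompt.
    parts = ["/imagine", *image_urls, text]
    if stylize is not None:
        parts.append(f"--s {stylize}")
    if chaos is not None:
        parts.append(f"--chaos {chaos}")
    if aspect_ratio is not None:
        width, height = aspect_ratio
        parts.append(f"--ar {width}:{height}")
    if quality is not None:
        parts.append(f"--q {quality}")
    if exclude:
        parts.append(f"--no {exclude}")
    return " ".join(parts)


def weighted(keywords):
    """Format (keyword, weight) pairs using the keyword::weight syntax."""
    return " ".join(f"{word}::{weight}" for word, weight in keywords)


print(imagine_command("Eiffel tower", chaos=60))
print(imagine_command("jasmine in the wild flower", aspect_ratio=(4, 3)))
print(imagine_command("KFC fried chicken", exclude="sauce"))
print(imagine_command(
    "wild animals " + weighted([("tiger", 2), ("zebra", 4), ("lions", 1.5)])
))
```

Keeping each option as a named argument makes it easy to vary one setting at a time when comparing outputs.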

DALL-E

For DALL-E, one tip is to write adjectives and nouns rather than verbs or complex scenes. To these, the user can add keywords like “gorgeous,” “amazing,” and “beautiful,” plus “digital painting,” “oil painting,” and similar, and “unreal engine” or “unity engine.” [9]

Other templates can be used that work well with this model:

- A photograph of X, 4k, detailed.

- Pixar style 3D render of X.

- Subdivision control mesh of X.

- Low-poly render of X; high resolution, 4k.

- A digital illustration of X, 4k, detailed, trending in artstation, fantasy vivid colors. [9]

Other user experiments can be accessed here. [9]
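The templates listed above use X as a placeholder for the subject. As a small illustration, the hypothetical helper below fills every template with one subject so the results can be compared side by side; the subject string is an arbitrary example.

```python
# Templates copied from the list above; X marks the subject.
TEMPLATES = [
    "A photograph of X, 4k, detailed.",
    "Pixar style 3D render of X.",
    "Subdivision control mesh of X.",
    "Low-poly render of X; high resolution, 4k.",
    "A digital illustration of X, 4k, detailed, trending in artstation, "
    "fantasy vivid colors.",
]


def fill_templates(subject, templates=TEMPLATES):
    """Replace the placeholder in each template with the given subject."""
    # Match "of X" rather than a bare "X" so no other letter is touched.
    return [template.replace("of X", f"of {subject}") for template in templates]


for prompt in fill_templates("a gorgeous red fox"):
    print(prompt)
```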

Stable Diffusion

Overall, prompt engineering in Stable Diffusion doesn’t differ from other AI image-generating models. However, it should be noted that it also allows prompt weighting and negative prompting. [10]

- Prompt weighting: weights vary between 1 and -1. Decimals can be used to reduce a prompt’s influence. [10]

- Negative prompting: in DreamStudio, negative prompts can be added by using | <negative prompt>: -1.0 (e.g. | disfigured, ugly:-1.0, too many fingers:-1.0). [10]
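A negative prompt in this style can be assembled from a list of unwanted terms. The sketch below follows only the example given above; the exact syntax DreamStudio accepts may differ, and `weighted_prompt` is a hypothetical helper that builds the text without contacting any service.

```python
def weighted_prompt(positive, negatives, negative_weight=-1.0):
    """Append negative terms to a prompt using the `| term:weight` form."""
    if not -1.0 <= negative_weight <= 1.0:
        raise ValueError("weights must be between -1 and 1")
    if not negatives:
        return positive
    negative_part = ", ".join(f"{term}:{negative_weight}" for term in negatives)
    return f"{positive} | {negative_part}"


print(weighted_prompt(
    "portrait of a knight",
    ["disfigured, ugly", "too many fingers"],
))
```

Passing a weight closer to zero, such as -0.5, would reduce the influence of the negative terms instead of applying them at full strength.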

Jasper Art

Jasper Art is similar to DALL-E 2 but results are different since Jasper gives priority to Natural Language Processing (NLP), being able to handle complex sentences with semantic articulation. [11]

There has been some experimentation with narrative prompts, an alternative to the combinations of keywords in a prompt, using instead more expressive descriptions. [11] For example, instead of using “tiny lion cub, 8k, kawaii, adorable eyes, pixar style, winter snowflakes, wind, dramatic lighting, pose, full body, adventure, fantasy, renderman, concept art, octane render, artgerm,” convert it to a sentence as if painting with words like, “Lion cub, small but mighty, with eyes that seem to pierce your soul. In a winter wonderland, he stands tall against the snow, wind ruffling his fur. He seems almost like a creature of legend, ready for an adventure. The lighting is dramatic and striking, and the render is breathtakingly beautiful.” [11]

References

- [1] Cite error: no text was provided for the reference named “4”.

- [2] Pavlichenko, N, Zhdanov, F and Ustalov, D (2022). Best prompts for text-to-image models and how to find them. arXiv:2209.11711v2

- [3] Cite error: no text was provided for the reference named “3”.

- [4] Cite error: no text was provided for the reference named “5”.

- [5] Shynkarenka, V (2020). Hacking Hacker News frontpage with GPT-3. Vasili Shynkarenka. https://vasilishynkarenka.com/gpt-3/

- [6] Robinson, R (2023). How to write an effective GPT-3 prompt. Zapier. https://zapier.com/blog/gpt-3-prompt/

- [7] Raj, G (2022). How to write good prompts for AI art generators: Prompt engineering made easy. Decentralized Creator. https://decentralizedcreator.com/write-good-prompts-for-ai-art-generators/

- [8] Nielsen, L (2022). An advanced guide to writing prompts for Midjourney (text-to-image). Mlearning. https://medium.com/mlearning-ai/an-advanced-guide-to-writing-prompts-for-midjourney-text-to-image-aa12a1e33b6

- [9] Strikingloo (2022). Text to image art: Experiments and prompt guide for DALL-E Mini and other AI art models. Strikingloo. https://strikingloo.github.io/art-prompts

- [10] DreamStudio. Prompt guide. DreamStudio. https://beta.dreamstudio.ai/prompt-guide

- [11] The Jasper Whisperer (2022). Improve your AI text-to-image prompts with enhanced NLP. Bootcamp. https://bootcamp.uxdesign.cc/improve-your-ai-text-to-image-prompts-with-enhanced-nlp-fc804964747f