Segment Anything Model and Dataset (SAM and SA-1B)

- See also: Computer Vision Papers, Computer Vision Models and Computer Vision Datasets


Introduction

Model Introduction

Segment Anything Model (SAM) is an artificial intelligence model developed by Meta AI. It lets users "cut out" any object in an image with a single click. SAM is a promptable segmentation system with zero-shot generalization: it can segment unfamiliar objects and images without additional training.

Project Introduction

Segment Anything is a project aimed at democratizing image segmentation by providing a foundation model and dataset for the task. Image segmentation involves identifying which pixels in an image belong to a specific object and is a core component of computer vision. This technology has a wide range of applications, from analyzing scientific imagery to editing photos. However, creating accurate segmentation models for specific tasks often necessitates specialized work by technical experts, access to AI training infrastructure, and large amounts of carefully annotated data.

Segment Anything Model (SAM) and SA-1B Dataset

On April 5, 2023, the Segment Anything project introduced the Segment Anything Model (SAM) and the Segment Anything 1-Billion mask dataset (SA-1B), as detailed in a research paper. At the time of its release, SA-1B was the largest segmentation dataset ever published. It was released to enable a wide range of applications and to foster further research into foundation models for computer vision. The SA-1B dataset is available for research purposes, and the Segment Anything Model is released under the permissive Apache 2.0 license.

SAM is designed to reduce the need for task-specific modeling expertise, training compute, and custom data annotation in image segmentation. The goal is a foundation model for image segmentation that is trained on diverse data and can adapt to specific tasks through prompting, much as prompting is used with natural language processing models. However, segmentation data is not readily available for training such a model, unlike images, videos, and text, which are abundant. The Segment Anything project therefore set out to do two things at once: develop a general, promptable segmentation model, and create a segmentation dataset on an unprecedented scale.

Segment Anything Model (SAM) Structure and Implementation

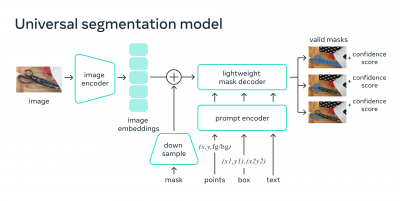

SAM's structure consists of three components:

- A ViT-H image encoder that runs once per image and outputs an image embedding.

- A prompt encoder that embeds input prompts such as clicks or boxes.

- A lightweight transformer-based mask decoder that predicts object masks from the image embedding and prompt embeddings.
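The practical consequence of this three-part split is that the heavy encoder runs once per image, while the lightweight decoder runs once per prompt. Below is a minimal sketch of that control flow using stand-in stubs. `HeavyImageEncoder`, `PromptDecoder`, and `InteractiveSession` are hypothetical names. The string "embedding" and "mask" values are placeholders, not real tensors. In the official `segment_anything` package, the same split appears as `SamPredictor.set_image`, which runs the image encoder, and `SamPredictor.predict`, which runs the prompt encoder and mask decoder.

```python
from dataclasses import dataclass
from typing import Tuple

Point = Tuple[float, float]

@dataclass
class HeavyImageEncoder:
    """Hypothetical stand-in for the ViT-H encoder; counts how often it runs."""
    calls: int = 0

    def encode(self, image_id: str) -> str:
        self.calls += 1
        # Placeholder "embedding"; the real encoder returns a feature tensor.
        return f"embedding({image_id})"

@dataclass
class PromptDecoder:
    """Hypothetical stand-in for the prompt encoder plus lightweight mask decoder."""
    calls: int = 0

    def decode(self, embedding: str, point: Point) -> str:
        self.calls += 1
        # Placeholder "mask" derived from the cached embedding and the prompt.
        return f"mask[{embedding} @ {point}]"

@dataclass
class InteractiveSession:
    encoder: HeavyImageEncoder
    decoder: PromptDecoder
    _embedding: str = ""

    def set_image(self, image_id: str) -> None:
        # Run the expensive encoder exactly once per image and cache the result.
        self._embedding = self.encoder.encode(image_id)

    def click(self, point: Point) -> str:
        # Each click reuses the cached embedding; only the cheap decoder runs.
        return self.decoder.decode(self._embedding, point)

session = InteractiveSession(HeavyImageEncoder(), PromptDecoder())
session.set_image("photo.jpg")
masks = [session.click(p) for p in [(10, 20), (30, 40), (55, 5)]]
print(session.encoder.calls, session.decoder.calls)  # 1 3
```

Caching the embedding keeps interactive clicking responsive, because only the small decoder sits in the per-click path.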

The image encoder is implemented in PyTorch and requires a GPU for efficient inference. The prompt encoder and mask decoder can either run directly in PyTorch or be converted to ONNX and run efficiently on CPU or GPU on any platform that supports ONNX Runtime.

The image encoder has 632 million parameters, while the prompt encoder and mask decoder together have 4 million parameters.

The image encoder takes approximately 0.15 seconds per image on an NVIDIA A100 GPU. The prompt encoder and mask decoder take about 50 milliseconds per prompt on a CPU in a web browser, using multithreaded SIMD execution.
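Combining the parameter counts and timings above gives a rough sense of why the split pays off. The sketch assumes one encoder pass per image and one decoder pass per prompt. It simply adds the quoted figures even though they were measured on different hardware (A100 GPU versus browser CPU). It also ignores image loading, data transfer, and pre- and post-processing, so treat the totals as illustrative only. `naive_seconds` models a hypothetical design that re-encodes the image for every prompt.

```python
ENCODER_SECONDS = 0.15    # per image, NVIDIA A100 (figure quoted in the text)
DECODER_SECONDS = 0.050   # per prompt, browser CPU (figure quoted in the text)
ENCODER_PARAMS_M = 632    # image encoder, millions of parameters
DECODER_PARAMS_M = 4      # prompt encoder + mask decoder, millions of parameters

def session_seconds(num_prompts: int) -> float:
    """Rough latency for one image: one encoder pass, then one decoder pass per prompt."""
    return ENCODER_SECONDS + num_prompts * DECODER_SECONDS

def naive_seconds(num_prompts: int) -> float:
    """Hypothetical alternative that re-runs the encoder for every prompt."""
    return num_prompts * (ENCODER_SECONDS + DECODER_SECONDS)

# Fraction of all parameters that sit in the per-prompt path.
decoder_share = DECODER_PARAMS_M / (ENCODER_PARAMS_M + DECODER_PARAMS_M)

for n in (1, 10):
    print(n, round(session_seconds(n), 2), round(naive_seconds(n), 2))
print(f"decoder share of parameters: {decoder_share:.2%}")
```

Under these assumptions, ten prompts on one image cost about 0.65 s with the cached embedding versus about 2 s when re-encoding each time. The per-prompt components hold well under 1% of the parameters.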

SAM was trained for 3-5 days on 256 A100 GPUs.
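To put the training budget in more familiar units, the quoted range can be multiplied out into total A100 GPU-hours. This is plain arithmetic on the figures above, not a separately reported number.

```latex
\begin{aligned}
256\,\text{GPUs} \times 24\,\tfrac{\text{h}}{\text{day}} \times 3\,\text{days} &= 18{,}432\,\text{GPU-hours} \\
256\,\text{GPUs} \times 24\,\tfrac{\text{h}}{\text{day}} \times 5\,\text{days} &= 30{,}720\,\text{GPU-hours}
\end{aligned}
```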

Segment Anything Model (SAM) Overview

Input Prompts

SAM utilizes a variety of input prompts to determine which object to segment in an image. These prompts enable the model to execute a wide range of segmentation tasks without further training. SAM can be prompted using interactive points and boxes, automatically segment all objects within an image, or generate multiple valid masks when given ambiguous prompts.
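Automatic "segment everything" mode can be understood as prompting SAM with many points at once. The official `SamAutomaticMaskGenerator` samples a regular grid of point prompts. Its `points_per_side` parameter defaults to 32, which gives 1,024 points. It then filters and deduplicates the resulting masks. The dependency-free sketch below only builds such a grid in normalized coordinates. `point_grid` and `to_pixels` are hypothetical helpers, not the library's code, and the filtering step is omitted.

```python
from typing import List, Tuple

def point_grid(points_per_side: int) -> List[Tuple[float, float]]:
    """Evenly spaced (x, y) prompts in [0, 1] coordinates, one at the centre of each cell."""
    offset = 1.0 / (2 * points_per_side)
    step = 1.0 / points_per_side
    coords = [offset + i * step for i in range(points_per_side)]
    return [(x, y) for y in coords for x in coords]

def to_pixels(points: List[Tuple[float, float]], width: int, height: int) -> List[Tuple[float, float]]:
    """Scale normalized points to pixel coordinates for an image of the given size."""
    return [(x * width, y * height) for x, y in points]

grid = point_grid(4)
labels = [1] * len(grid)  # 1 = foreground point, following SAM's point-label convention
print(len(grid), grid[0], grid[-1])  # 16 (0.125, 0.125) (0.875, 0.875)
```

Each grid point acts as an independent single-click prompt. This is why automatic mode can produce many overlapping candidate masks that need filtering afterwards.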

Integration with Other Systems

The promptable design of SAM makes it straightforward to integrate with other systems. In the future, SAM could take input prompts from systems such as AR/VR headsets, for example to select an object based on where the user is looking. Likewise, bounding boxes produced by an object detector can serve as box prompts. This enables text-to-object segmentation when the detector responds to a text query.
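Below is a sketch of the detector-to-SAM hand-off, in which boxes from a detector that match a text query become box prompts. The `Detection` type, `boxes_for_query`, and the detector output are all hypothetical and illustrative. Exact string matching on labels stands in for whatever text matching a real open-vocabulary detector would perform. In the official `segment_anything` package, a single box can be passed to `SamPredictor.predict` through its `box` argument in XYXY pixel format.

```python
from dataclasses import dataclass
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x0, y0, x1, y1) in pixels

@dataclass
class Detection:
    label: str
    score: float
    box: Box

def boxes_for_query(detections: List[Detection], query: str, min_score: float = 0.5) -> List[Box]:
    """Keep confident detections whose label matches the text query; each box becomes a prompt."""
    q = query.strip().lower()
    return [d.box for d in detections if d.label.lower() == q and d.score >= min_score]

# Hypothetical detector output for one image (illustrative values only).
detections = [
    Detection("dog", 0.92, (12, 30, 140, 200)),
    Detection("cat", 0.88, (150, 40, 260, 190)),
    Detection("dog", 0.31, (300, 10, 330, 60)),
]
prompts = boxes_for_query(detections, "Dog")
print(prompts)  # [(12, 30, 140, 200)]
```

Each returned box would then be given to SAM as a separate box prompt, yielding one mask per matched object.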

Extensible Outputs

Output masks generated by SAM can be used as inputs to other AI systems. These masks can be employed for various purposes such as tracking objects in videos, facilitating image editing applications, lifting objects to 3D, or enabling creative tasks like collaging.
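SAM's outputs are per-pixel binary masks, which makes them easy to reuse downstream. The sketch assumes a mask stored as an H×W grid of booleans, using plain Python lists rather than the NumPy arrays the official package returns. The helpers derive a bounding box and an area from the mask, and cut the object out of an image as a photo-editing tool might. `mask_bbox` and `cut_out` are hypothetical names.

```python
from typing import List, Optional, Tuple

Mask = List[List[bool]]

def mask_bbox(mask: Mask) -> Optional[Tuple[int, int, int, int]]:
    """Tight inclusive (x0, y0, x1, y1) box around the True pixels, or None if the mask is empty."""
    ys = [y for y, row in enumerate(mask) if any(row)]
    xs = [x for row in mask for x, v in enumerate(row) if v]
    if not ys:
        return None
    return (min(xs), min(ys), max(xs), max(ys))

def cut_out(image: List[List[int]], mask: Mask, background: int = 0) -> List[List[int]]:
    """Keep pixels inside the mask and replace everything else with a background value."""
    return [[px if m else background for px, m in zip(img_row, mask_row)]
            for img_row, mask_row in zip(image, mask)]

mask = [
    [False, False, False, False],
    [False, True,  True,  False],
    [False, True,  False, False],
]
image = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12]]
print(mask_bbox(mask))       # (1, 1, 2, 2)
print(sum(map(sum, mask)))   # 3 (area in pixels)
print(cut_out(image, mask))  # [[0, 0, 0, 0], [0, 6, 7, 0], [0, 10, 0, 0]]
```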

Zero-shot Generalization

SAM possesses a general understanding of what objects are, allowing it to achieve zero-shot generalization to unfamiliar objects and images without the need for supplementary training.

Background Information

Historically, there have been two main approaches to segmentation problems: interactive segmentation and automatic segmentation. Interactive segmentation enables the segmentation of any object class but requires human guidance, while automatic segmentation is specific to predetermined object categories and requires substantial amounts of manually annotated data, compute resources, and technical expertise. SAM is a generalization of these two approaches, capable of performing both interactive and automatic segmentation.

Promptable Segmentation

SAM is designed to return a valid segmentation mask for any prompt. A prompt can be foreground/background points, a rough box or mask, freeform text, or any other information indicating what to segment in an image. Text prompts were explored in the research paper but are not part of the publicly released model. Because SAM was trained on the SA-1B dataset of over 1 billion masks, it generalizes to new objects and images beyond its training data. As a result, for many use cases, practitioners no longer need to collect their own segmentation data and fine-tune a model.
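An ambiguous prompt can mean more than one thing. A single click on a shirt, for example, could refer to the shirt or to the person wearing it. In such cases SAM can return several candidate masks, each with its own predicted quality score. With `multimask_output=True`, the official `SamPredictor.predict` returns three masks and their scores. The sketch below assumes one simple way to resolve the ambiguity: keep the highest-scoring candidate. The `Candidate` type, `pick_mask`, and all numbers are hypothetical and do not come from the model.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Candidate:
    name: str             # illustrative description of what the mask covers
    area: int             # mask area in pixels
    predicted_iou: float  # the model's own estimate of mask quality

def pick_mask(candidates: List[Candidate]) -> Candidate:
    """Resolve an ambiguous prompt by keeping the candidate with the highest predicted IoU."""
    if not candidates:
        raise ValueError("no candidate masks")
    return max(candidates, key=lambda c: c.predicted_iou)

# Made-up candidates for a single click on a shirt (not real model output).
candidates = [
    Candidate("whole person", 52_000, 0.81),
    Candidate("shirt", 14_500, 0.93),
    Candidate("shirt pocket", 900, 0.74),
]
print(pick_mask(candidates).name)  # shirt
```

An interactive tool might instead show all candidates and let the user choose. Taking the maximum score is only the simplest automatic policy.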

Segmenting 1 Billion Masks: Building the SA-1B Dataset

To train SAM, a massive and diverse dataset was needed. The SA-1B dataset was collected using the model itself; annotators used SAM to annotate images interactively, and the newly annotated data was then used to update SAM. This process was repeated multiple times to iteratively improve both the model and the dataset.

A data engine with three "gears" (stages) was built to create the SA-1B dataset:

- model-assisted annotation, in which annotators use SAM interactively to label masks;

- a mix of fully automatic annotation and model-assisted annotation;

- fully automatic mask creation.
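The model-in-the-loop idea behind the data engine can be sketched as a loop with three steps. It annotates images with the current model, retrains on the growing dataset, and moves through the three gears in order. Everything here is hypothetical: `run_data_engine`, the toy `annotate` and `retrain` callables, and `rounds_per_stage` are illustrative. They do not reproduce the actual SA-1B schedule, annotation tools, or training procedure.

```python
from enum import Enum
from typing import Callable, List, Tuple

class Stage(Enum):
    ASSISTED_MANUAL = "model-assisted annotation"
    SEMI_AUTOMATIC = "mix of automatic and assisted annotation"
    FULLY_AUTOMATIC = "fully automatic mask creation"

def run_data_engine(
    images: List[str],
    annotate: Callable[[Stage, int, str], List[str]],
    retrain: Callable[[int, List[str]], int],
    rounds_per_stage: int = 2,  # hypothetical; not the real SA-1B schedule
) -> Tuple[int, List[str]]:
    """Annotate with the current model, retrain on everything collected so far, repeat."""
    model_version = 0
    dataset: List[str] = []
    for stage in Stage:  # Enum iteration follows declaration order
        for _ in range(rounds_per_stage):
            for image in images:
                dataset.extend(annotate(stage, model_version, image))
            model_version = retrain(model_version, dataset)
    return model_version, dataset

def toy_annotate(stage: Stage, version: int, image: str) -> List[str]:
    # Hypothetical: record which gear and model version produced the annotation.
    return [f"{image}:{stage.name}:v{version}"]

def toy_retrain(version: int, dataset: List[str]) -> int:
    # Hypothetical: "retraining" just bumps the model version.
    return version + 1

version, data = run_data_engine(["img1", "img2"], toy_annotate, toy_retrain)
print(version, len(data))  # 6 12
```

The key property the sketch captures is the feedback loop: annotations made in later rounds are produced by a model that was retrained on the earlier ones.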

The final dataset includes more than 1.1 billion segmentation masks collected on about 11 million licensed and privacy-preserving images.
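Dividing the two totals gives the average density of the dataset. Both counts are approximate ("more than" 1.1 billion masks, "about" 11 million images), so the result is only a rough average, and individual images vary widely.

```latex
\frac{1.1 \times 10^{9}\ \text{masks}}{1.1 \times 10^{7}\ \text{images}} \approx 100\ \text{masks per image}
```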

Potential Applications and Future Outlook

SAM has the potential to be used in a wide array of applications, such as AR/VR, content creation, scientific domains, and more general AI systems. Its promptable design enables flexible integration with other systems. Its composable design allows it to be used in extensible ways, potentially for tasks not anticipated when the model was designed. In the future, SAM could be used in any domain that requires finding and segmenting objects in images, such as agriculture, biological research, or even space exploration. Used together with other systems, its masks could also help localize and track objects in videos for scientific studies on Earth and beyond.

By sharing the research and dataset, the project aims to accelerate research into segmentation and into more general image and video understanding. As a component in a larger system, SAM can perform segmentation tasks and contribute to a more comprehensive multimodal understanding of the world, for example understanding both the visual and text content of a webpage.

Looking ahead, tighter coupling between understanding images at the pixel level and higher-level semantic understanding of visual content could lead to even more powerful AI systems. The Segment Anything project is a significant step forward in this direction, opening up possibilities for new applications and advancements in computer vision and AI research.

FAQs and Additional Information

SAM predicts object masks only and does not generate labels. It currently supports images or individual frames extracted from videos, but not videos directly.
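Since SAM works on single images, one way to apply it to video is to extract frames and segment each one independently. The sketch assumes a hypothetical `segment_frame` callable that stands in for a SAM-based image segmenter. It can optionally sample every n-th frame to save compute. The masks it collects are unlabeled and are not linked across frames. Naming objects or tracking them over time would require additional systems.

```python
from typing import Any, Callable, Dict, Iterable, List

def segment_video(
    frames: Iterable[Any],
    segment_frame: Callable[[Any], List[Any]],
    every_n: int = 1,
) -> Dict[int, List[Any]]:
    """Treat a video as independent images, segmenting every n-th frame."""
    if every_n < 1:
        raise ValueError("every_n must be at least 1")
    results: Dict[int, List[Any]] = {}
    for index, frame in enumerate(frames):
        if index % every_n == 0:
            results[index] = segment_frame(frame)
    return results

# Hypothetical stand-in for a SAM-based image segmenter: one placeholder mask per frame.
def fake_segmenter(frame: str) -> List[str]:
    return [f"mask-of-{frame}"]

frames = [f"frame{i}" for i in range(7)]
out = segment_video(frames, fake_segmenter, every_n=3)
print(sorted(out))  # [0, 3, 6]
```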

The source code for SAM is available on GitHub for users interested in exploring and utilizing the model.