AgiBot X1

Last reviewed

Apr 26, 2026

Sources

17 citations

Review status

Source-backed

Revision

v4 · 4,244 words


| AgiBot X1 | |
|---|---|
| General information | |
| Manufacturer | AgiBot |
| Also known as | Lingxi X1 |
| Country of origin | China |
| Year introduced | 2024 |
| Status | Available |
| Price | ~US$19,500 (developer kit) |
| Website | agibot.com/products/X1 |

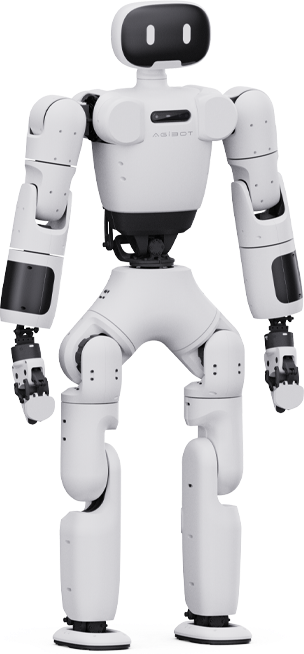

The AgiBot X1, also marketed as the Lingxi X1, is a full-stack, open-source bipedal humanoid robot developed by AgiBot (formally AgiBot Innovation (Shanghai) Technology Co., Ltd.). Unveiled on August 18, 2024, alongside four other models in the company's second-generation product lineup, the X1 was incubated by AgiBot's internal research division known as X-Lab. It was designed from the ground up as a modular, affordable research platform for university laboratories, robotics developers, and artificial intelligence researchers.

Standing 130 cm tall and weighing 33 kg, the X1 features 34 active degrees of freedom, proprietary PowerFlow servo actuators, and a dual-bus real-time control architecture combining EtherCAT and FDCAN communication. Unlike AgiBot's commercially oriented Yuanzheng A-series humanoids, the X1 prioritizes hackability and transparency: its complete hardware designs (CAD files, PCB schematics), control firmware, and middleware source code are published on GitHub. This open-source philosophy positions the X1 as one of the most accessible bipedal humanoid platforms available to the global research community.

The X1 is the first model in AgiBot's Lingxi X-series line of compact bipedal robots. Lessons learned from its development directly informed the design of the AgiBot X2 (Lingxi X2), which was unveiled in March 2025 with enhanced multimodal interaction, autonomous navigation, and more agile locomotion capabilities.

AgiBot was founded in February 2023 in Shanghai by Peng Zhihui and Deng Taihua, both former engineers at Huawei. Peng Zhihui, born in 1993, earned a master's degree in information and communication engineering from the University of Electronic Science and Technology of China (UESTC). Before his corporate career, he gained a large online following on the Chinese video platform Bilibili under the handle "Zhihuijun" (稚晖君), where he showcased ambitious DIY engineering projects including a self-balancing robot, a miniature television, a robotic arm capable of stitching grape skins (nicknamed "Dummy"), and a self-driving bicycle. These projects attracted millions of views and earned him the title of "Top 100 Uploaders of 2021" from Bilibili.

In 2020, Peng joined Huawei through its highly selective "Genius Youth" (天才少年) talent program, reportedly earning up to 3 million yuan per year. At Huawei, he worked as an AI algorithm engineer in the computing product line, contributing to the Ascend AI platform and focusing on edge heterogeneous computing. He left Huawei in December 2022 to co-found AgiBot with Deng Taihua, a former Huawei vice president who had led the company's 5G initiatives and the Ascend AI ecosystem.

The company's official name, Shanghai Zhiyuan Technology, reflects the Chinese branding, while the global trade name "AgiBot" combines "AGI" (artificial general intelligence) and "Bot" (robot). Within roughly two years of founding, AgiBot raised more than US$85 million across multiple funding rounds and attracted investors including BYD, Tencent, HongShan Capital (formerly Sequoia Capital China), Hillhouse Investment, and others. The company's valuation surpassed US$1 billion by early 2025.

AgiBot's first product was the Expedition A1 (also called RAISE A1), unveiled in August 2023, just six months after the company's founding. The A1 was a 175 cm tall, 53 kg bipedal humanoid with 49 degrees of freedom, aimed at industrial applications such as bolt tightening, vehicle inspections, and laboratory experiments. It introduced AgiBot's proprietary PowerFlow joint motor technology and the SkillHand dexterous hand.

By mid-2024, AgiBot had developed the Yuanzheng A2 series as its flagship commercial humanoid line and was preparing a broader portfolio to address different market segments. The company recognized that while its A-series robots served commercial and industrial customers, there was a significant need for an open, affordable platform that could democratize humanoid robot research. This gap became the motivation for the X1.

The AgiBot X1 was developed within AgiBot's X-Lab, an internal research and innovation division focused on open-source robotics and community-driven development. The X-Lab team designed the X1 to be a "full-stack open-source" platform, meaning that not only the software but also the hardware design files would be made freely available to the public.

On August 18, 2024, AgiBot co-founder Peng Zhihui hosted a product launch event where he unveiled five new robot models from two product families: the Yuanzheng (Expedition) series and the Lingxi series. The Lingxi X1 and Lingxi X1-W (a wheeled variant) were introduced as the company's dedicated research platforms, while the Yuanzheng A2, A2-W, and A2-Max targeted commercial deployment.

At the event, AgiBot announced that the Lingxi X1 would be fully open-sourced by September 2024. This pledge was fulfilled when AgiBot published the X1's complete design materials on GitHub, including hardware design schematics, software frameworks, the AimRT middleware source code, and locomotion control algorithms. The company also announced plans to open-source one million real-machine data points and tens of millions of simulation datasets generated by its AIDEA data system in the fourth quarter of 2024.

The open-source release included multiple GitHub repositories:

The AgiBot X1 stands 130 cm (4 feet 3 inches) tall and weighs 33 kg (73 lbs) with its battery installed. The chassis uses a lightweight aluminum-carbon composite construction that keeps the total mass low while maintaining structural rigidity sufficient for bipedal locomotion. The compact form factor was a deliberate design choice: smaller than the 175 cm A-series robots, the X1 is easier to transport, safer to operate in laboratory environments, and less expensive to manufacture.

The robot features a modular, "LEGO-style" architecture where every limb, servo, electronic board, and gripper can be independently replaced or upgraded without requiring recalibration of the entire system. This modularity extends to the mechanical assemblies, for which standardized STEP files and BOMs are provided, allowing researchers to fabricate replacement parts or custom modifications.

The X1 has 34 active degrees of freedom distributed across its body:

| Body Region | Degrees of Freedom | Notes |
|---|---|---|
| Base joints | 29 | Legs, arms, torso |
| Gripper units | 2 | OmniPicker adaptive grippers |
| Head articulation | 3 | Pan, tilt, and roll axes |
| Total | 34 | |

This high joint count provides smooth, precise movements across the robot's full kinematic chain. The 29 base joints cover the hip, knee, ankle, shoulder, elbow, and wrist assemblies on both sides, plus waist articulation for trunk rotation and flexion.

The X1 is driven by AgiBot's proprietary PowerFlow R-series servo actuators, a family of quasi-direct-drive joint motors that combine high-torque planetary reducers with gear ratios within 10:1, dual position encoders, liquid cooling circulation, and a self-developed vector control (FOC) driver. The PowerFlow actuators are shared across AgiBot's entire product line, from the X1 through the A-series humanoids.

Three actuator models are used in the X1, each sized for its role in the kinematic chain:

| Actuator Model | Peak Torque | Peak Speed | Rated Torque | Rated Voltage | Primary Use |
|---|---|---|---|---|---|
| PowerFlow R86-3 | 200 N·m | 85 rpm | 60 N·m | 48 V | Hip and knee joints (high-load) |
| PowerFlow R86-2 | 80 N·m | 260 rpm | 20 N·m | 48 V | Shoulder and elbow joints (medium-load) |
| PowerFlow R52 | 19 N·m | 130 rpm | 6 N·m | 48 V | Wrist and head joints (low-load) |

The actuators feature integrated motor drivers with force feedback capabilities, enabling precise position and torque sensing necessary for stable bipedal locomotion and manipulation tasks. Each PowerFlow unit supports CAN bus connectivity and firmware-configurable settings, allowing researchers to tune motor behavior at the firmware level.
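
As an illustration of how the table values might be used in a simulation or controller configuration, the following minimal sketch stores the three actuator specifications and saturates a commanded torque at the peak value. The class, dictionary, and `clamp_torque` helper are hypothetical names chosen for this example; they are not taken from AgiBot's firmware or repositories.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ActuatorSpec:
    """Nameplate values for one PowerFlow model (copied from the table above)."""
    name: str
    peak_torque_nm: float
    peak_speed_rpm: float
    rated_torque_nm: float

    @property
    def peak_speed_rad_s(self) -> float:
        # 1 rpm = 2*pi/60 rad/s
        return self.peak_speed_rpm * 2.0 * math.pi / 60.0


# Hypothetical lookup table keyed by a short model name.
ACTUATORS = {
    "R86-3": ActuatorSpec("PowerFlow R86-3", 200.0, 85.0, 60.0),
    "R86-2": ActuatorSpec("PowerFlow R86-2", 80.0, 260.0, 20.0),
    "R52": ActuatorSpec("PowerFlow R52", 19.0, 130.0, 6.0),
}


def clamp_torque(spec: ActuatorSpec, commanded_nm: float) -> float:
    """Saturate a commanded torque at +/- the actuator's peak torque."""
    limit = spec.peak_torque_nm
    return max(-limit, min(limit, commanded_nm))


if __name__ == "__main__":
    hip = ACTUATORS["R86-3"]
    print(clamp_torque(hip, 250.0))        # saturates at the 200 N·m peak
    print(round(hip.peak_speed_rad_s, 3))  # peak speed in rad/s
```

A real controller would also need to respect the rated (continuous) torque over time; this sketch only applies the instantaneous peak limit.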

In addition to the rotary actuators, the X1 uses L28 linear actuators with a capacity of 110 N for specific joint configurations requiring linear rather than rotational motion.

The X1 is equipped with two OmniPicker adaptive grippers as its standard end effectors. Each gripper provides:

| Parameter | Value |
|---|---|
| Maximum clamping force | 30 N |
| Stroke | 120 mm |
| Communication protocols | CAN, RS485, Serial |

The single-arm payload capacity is 0.5 kg, suitable for light manipulation tasks such as grasping laboratory objects, picking up small tools, or interacting with tabletop items. While this payload is modest compared to industrial humanoids, it is sufficient for the X1's intended role as a research platform for reinforcement learning locomotion and basic manipulation experiments.

The X1 employs a dual-bus real-time control system that is one of its most distinctive technical features:

The system supports cascading of up to 16 decentralized Domain Controller Units (DCUs), each providing highly integrated power and communication management. The DCUs achieve microsecond-level timestamp alignment across the network, enabling a 1 kHz whole-body torque control loop that synchronizes movement across all 34 degrees of freedom.

Additional communication interfaces include SPI, UART, GPIO, and USB-C for data transfer and diagnostics. A 24V / 5A auxiliary power rail is provided for custom sensor and peripheral integration.

| Control System Feature | Specification |
|---|---|
| Primary communication bus | EtherCAT |
| Secondary communication bus | FDCAN (5 Mbps) |
| Control loop frequency | 1 kHz |
| Maximum cascaded DCUs | 16 |
| Timestamp alignment | Microsecond-level |
| EtherCAT-to-FDCAN conversion | Supported by DCU |
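
To give a feel for what a 1 kHz loop implies for the FDCAN side, here is a rough back-of-the-envelope sketch. The frame size and the number of frames per joint are assumptions made purely for illustration, and the calculation treats 5 Mbps as sustained throughput, which is optimistic because the arbitration phase of a CAN FD frame runs at a lower bit rate. It is not a description of how the DCUs actually schedule traffic.

```python
CONTROL_RATE_HZ = 1_000        # control loop frequency from the table above
FDCAN_BITRATE_BPS = 5_000_000  # FDCAN bit rate from the table above

# ASSUMPTIONS (not from the source):
ASSUMED_FRAME_BITS = 200       # hypothetical on-wire size of one frame, overhead included
ASSUMED_FRAMES_PER_JOINT = 2   # hypothetical: one command + one feedback frame per cycle


def cycle_period_us(rate_hz: int) -> float:
    """Length of one control cycle in microseconds."""
    return 1_000_000 / rate_hz


def max_joints_per_bus(bitrate_bps: int, rate_hz: int,
                       frame_bits: int, frames_per_joint: int) -> int:
    """Optimistic upper bound on joints one bus can serve every cycle."""
    bits_per_cycle = bitrate_bps // rate_hz
    frames_per_cycle = bits_per_cycle // frame_bits
    return frames_per_cycle // frames_per_joint


if __name__ == "__main__":
    print(cycle_period_us(CONTROL_RATE_HZ))
    print(max_joints_per_bus(FDCAN_BITRATE_BPS, CONTROL_RATE_HZ,
                             ASSUMED_FRAME_BITS, ASSUMED_FRAMES_PER_JOINT))
```

Under these assumed numbers a single bus could not serve all 34 joints within one cycle, which is consistent with the design choice of distributing joints across several DCUs behind an EtherCAT backbone.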

The X1 uses an external PC running Ubuntu 22.04 with a real-time kernel as its primary compute platform. The x86-based host computer handles high-level perception, planning, and policy inference, while onboard microcontrollers manage low-level actuator control and sensor processing.

The software stack is built on AgiBot's open-source AimRT middleware framework, which serves as an alternative to ROS 2. AimRT is a lightweight C++20 framework with a codebase under 50,000 lines (compared to roughly 200,000 lines for ROS 2). It supports multiple communication protocols including ROS 2, HTTP, gRPC, MQTT, and Zenoh, and maintains full compatibility with the ROS 2 ecosystem through plugins. An AimRT node configured with a ROS 2 Humble plugin can function as a native ROS 2 node while benefiting from AimRT's performance improvements, which include up to 30% reduced latency in multi-node communication scenarios.

The X1 also supports Python and C++ development through the ROS 2 SDK, enabling researchers to write both deterministic low-level control code and high-level behavior scripts in their preferred language.

The X1's sensor suite includes:

The modular design includes documented expansion interfaces, allowing researchers to integrate custom sensors such as depth cameras, additional LiDAR units, or tactile sensor arrays without modifying the base platform.

The X1 operates on a rechargeable battery that provides approximately 2 hours of runtime under typical walking and manipulation workloads. The maximum walking speed is 1 m/s (3.6 km/h or 2.2 mph).

| Category | Parameter | Value |
|---|---|---|
| Physical | Height | 130 cm (4 ft 3 in) |
| Physical | Weight (with battery) | 33 kg (73 lbs) |
| Physical | Construction | Aluminum-carbon composite |
| Mobility | Total degrees of freedom | 34 active |
| Mobility | Maximum walking speed | 1 m/s (3.6 km/h) |
| Manipulation | Single-arm payload | 0.5 kg |
| Manipulation | Gripper clamping force | 30 N |
| Manipulation | Gripper stroke | 120 mm |
| Power | Battery runtime | ~2 hours |
| Computing | Primary OS | Ubuntu 22.04 (real-time kernel) |
| Computing | Architecture | x86 (external PC) |
| Computing | Middleware | AimRT (open-source) |
| Communication | Primary bus | EtherCAT |
| Communication | Secondary bus | FDCAN (5 Mbps) |
| Communication | Control loop rate | 1 kHz |
| Communication | Other interfaces | USB-C, SPI, UART, GPIO |

A central component of the X1's open-source release is its reinforcement learning training pipeline for bipedal locomotion. The training code, published in the agibot_x1_train GitHub repository, enables researchers to train walking policies in simulation and deploy them on the physical robot through sim-to-real transfer.

The training framework uses NVIDIA Isaac Gym Preview 4 as its primary simulation environment. Isaac Gym provides GPU-accelerated physics simulation that allows thousands of parallel robot instances to train simultaneously, dramatically reducing the wall-clock time needed to converge on effective locomotion policies. The required software stack includes PyTorch 1.13 with CUDA 11.7, Python 3.8, and NumPy 1.23.
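
Because the stack pins fairly old versions, it can help to verify an environment before starting a long training run. The sketch below is one possible check using only the Python standard library. The distribution names `torch` and `numpy` are assumed to be how the packages are installed, the helper names are hypothetical, and the CUDA version is not checked here.

```python
import sys
from importlib import metadata

# Version prefixes from the text above; distribution names are assumptions.
REQUIRED = {"torch": "1.13", "numpy": "1.23"}
REQUIRED_PYTHON = (3, 8)


def matches(installed: str, required_prefix: str) -> bool:
    """True if `installed` is the required release or a patch/build of it."""
    return installed == required_prefix or installed.startswith(required_prefix + ".")


def check_stack(lookup=metadata.version, python_version=None):
    """Return a list of human-readable problems; an empty list means OK.

    `lookup` maps a distribution name to its version string and is
    injectable so the check can be exercised without the real packages.
    """
    problems = []
    py = tuple(python_version or sys.version_info[:2])
    if py != REQUIRED_PYTHON:
        problems.append(f"python: found {py[0]}.{py[1]}, need "
                        f"{REQUIRED_PYTHON[0]}.{REQUIRED_PYTHON[1]}")
    for dist, prefix in REQUIRED.items():
        try:
            installed = lookup(dist)
        except metadata.PackageNotFoundError:
            problems.append(f"{dist}: not installed (need {prefix}.x)")
            continue
        if not matches(installed, prefix):
            problems.append(f"{dist}: found {installed}, need {prefix}.x")
    return problems


if __name__ == "__main__":
    for line in check_stack() or ["environment matches the documented stack"]:
        print(line)
```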

The reinforcement learning pipeline follows a structured workflow:

- train.py: Trains locomotion policies in the Isaac Gym environment, producing PyTorch .pt model checkpoints
- play.py: Tests trained policies in simulation with visualization, supporting joystick control (Logitech F710) for interactive evaluation of movement commands including forward walking, lateral strafing, and in-place rotation
- export_policy_dh.py: Converts trained policies to TorchScript JIT format for deployment
- export_onnx_dh.py: Converts policies to ONNX format for cross-platform inference

The framework supports sim-to-sim validation using MuJoCo, allowing reinforcement learning policies trained in Isaac Gym to be tested in an alternative physics simulator before deployment on the physical robot. This two-stage validation process helps identify policies that generalize well across different physics engines, increasing confidence in successful sim-to-real transfer.
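
The joystick-driven evaluation described for play.py amounts to turning stick positions into forward, lateral, and rotation commands for the policy. A minimal sketch of such a mapping is shown here. It is not the repository's code: the axis convention (normalized to [-1, 1]), the deadzone, and the lateral and yaw limits are all assumptions for illustration, and only the 1 m/s forward limit comes from the stated maximum walking speed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VelocityCommand:
    forward_m_s: float  # forward walking
    lateral_m_s: float  # lateral strafing
    yaw_rad_s: float    # in-place rotation


MAX_FORWARD_M_S = 1.0  # stated maximum walking speed of the X1
MAX_LATERAL_M_S = 0.3  # ASSUMPTION: illustrative limit
MAX_YAW_RAD_S = 0.5    # ASSUMPTION: illustrative limit
DEADZONE = 0.1         # ASSUMPTION: illustrative stick deadzone


def apply_deadzone(axis: float, deadzone: float = DEADZONE) -> float:
    """Suppress small stick noise, then rescale the remainder to [-1, 1]."""
    axis = max(-1.0, min(1.0, axis))
    if abs(axis) <= deadzone:
        return 0.0
    sign = 1.0 if axis > 0 else -1.0
    return sign * (abs(axis) - deadzone) / (1.0 - deadzone)


def axes_to_command(forward_axis: float, lateral_axis: float,
                    yaw_axis: float) -> VelocityCommand:
    """Map three normalized joystick axes to a velocity command."""
    return VelocityCommand(
        forward_m_s=apply_deadzone(forward_axis) * MAX_FORWARD_M_S,
        lateral_m_s=apply_deadzone(lateral_axis) * MAX_LATERAL_M_S,
        yaw_rad_s=apply_deadzone(yaw_axis) * MAX_YAW_RAD_S,
    )


if __name__ == "__main__":
    # Full forward stick, centered lateral stick, slight yaw noise.
    print(axes_to_command(1.0, 0.0, 0.05))
```

Rescaling after the deadzone keeps the command continuous at the deadzone edge, so the commanded speed ramps up from zero instead of jumping.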

The codebase builds upon established open-source RL frameworks, including:

Researchers can use the training code not only with the X1 but also import it for use with other robot models, making it a general-purpose tool for bipedal locomotion research.

The AgiBot X1 stands out in the humanoid robotics landscape for the depth and breadth of its open-source commitment. While many robot manufacturers provide SDK access or ROS 2 compatibility layers, the X1 offers what AgiBot calls "true transparency": full mechanical drawings, PCB schematics, firmware source code, and control algorithms are all publicly available.

This approach contrasts with competitors like the Unitree G1, which does not open-source its hardware designs. SDK and ROS 2 integration is offered only on the Unitree G1 EDU variant, and even that SDK-level openness falls short of the X1's full-stack transparency.

| Feature | AgiBot X1 | Unitree G1 | Unitree G1 EDU |
|---|---|---|---|
| Hardware open-source | Full (CAD, PCB, BOM) | No | No |
| Software open-source | Full (firmware, middleware, RL code) | No | SDK + ROS 2 |
| Degrees of freedom | 34 | 23 | 23-43 |
| Payload capacity | 0.5 kg | 2 kg | 3 kg |
| Height | 130 cm | 132 cm | 132 cm |

The X1's open-source ecosystem extends beyond the robot itself. AgiBot has released several complementary resources:

The open-source strategy serves a dual purpose for AgiBot. It positions the company as a leader in the robotics research community, building goodwill and attracting talent. At the same time, it creates a developer ecosystem around AgiBot's technology stack (particularly AimRT and the PowerFlow actuators), which may drive adoption of the company's commercial products as researchers move from prototyping to deployment.

The AgiBot X1 is designed primarily for four market segments:

The X1 serves as a testbed for bipedal locomotion algorithms, reinforcement learning for embodied AI, human-robot interaction studies, and computer vision research. Its full-stack open-source nature allows researchers to modify any component of the system, from low-level motor controllers to high-level behavior planners, without reverse engineering.

University programs can use the X1 as a teaching platform, providing students with hands-on experience in bipedal control, sensor integration, and robot software development. The accessible codebase and comprehensive documentation lower the barrier to entry for students new to humanoid robotics.

The X1 functions as a physical testbed for simulation-to-real transfer experiments. Researchers training neural network policies in simulation can validate their approaches on real hardware, using the X1's reinforcement learning pipeline as a starting point. The platform's compatibility with the AgiBot World dataset enables multi-modal learning experiments combining real robot data with simulation.

Engineering teams can use the X1 to validate concepts before investing in custom hardware development. The modular design allows rapid reconfiguration for different experimental setups, and the well-documented interfaces simplify the integration of custom sensors, end effectors, or computing modules.

The AgiBot X1 developer kit is priced at approximately US$19,500 for the entry-level configuration. AgiBot does not publicly list fixed prices on its website; prospective buyers are directed to contact AgiBot's sales team for quotes, with educational and academic pricing options available.

The robot is available for purchase through AgiBot's official website and its online store (store.agibot.com). International shipping is supported. As of 2026, the X1 is in active production and commercially available, having moved beyond the prototype stage.

The AgiBot X1 was the first model in AgiBot's Lingxi X-series and served as the direct predecessor to the AgiBot X2 (Lingxi X2), which was unveiled on March 10, 2025. The X2 represents a significant evolution of the X-series concept, building on the X1's foundation while introducing capabilities for commercial service and entertainment applications.

| Feature | AgiBot X1 | AgiBot X2 | AgiBot X2 Ultra |
|---|---|---|---|
| Height | 130 cm | ~131 cm | ~131 cm |
| Weight | 33 kg | ~35 kg | ~39 kg |
| Degrees of freedom | 34 | 25 | 30 |
| Max walking speed | 1 m/s | 1.8 m/s | 1.8 m/s |
| Arm payload | 0.5 kg | 1 kg (full range); 3 kg (specific postures) | 1 kg (full range); 3 kg (specific postures) |
| Arm reach | N/A | 558 mm | 558 mm |
| Battery | ~2 hours | ~2 hours (at 0.5 m/s) | ~2 hours (at 0.5 m/s) |
| Battery capacity | N/A | ~500 Wh | ~500 Wh |
| Computing | External PC (x86) | RK3588 x2 | RK3588 x2 + Orin NX (157 TOPS) |
| Navigation | Manual/scripted | Autonomous with obstacle avoidance | Autonomous with obstacle avoidance |
| Sensors (head) | RGB camera, IMU | Interactive RGB camera, touch sensor | RGB camera, touch sensor, 3D LiDAR, stereo RGB, RGB-D |
| Multimodal interaction | None | Voice, visual, tactile, facial expression | Voice, visual, tactile, facial expression |
| Open-source | Full-stack | Developer SDK (AimDK_X2) | Developer SDK (AimDK_X2) |
| Auto-charging | No | No | Optional dock |
| Estimated price | ~US$19,500 | US$14,000-50,000 | US$14,000-50,000 |

While the X1 was designed as an open-source research platform, the X2 was engineered for commercial service and interactive roles. The X2 can walk, run, dance, ride a bicycle, and balance on a hoverboard, demonstrating a level of dynamic agility that exceeds the X1's capabilities. This agility comes from a bionic ankle design and liquid-smooth kinematics that were refined based on locomotion data and experience gathered during X1 development.

The X2 introduces multimodal perception and interaction, integrating visual, voice, tactile, and facial expression recognition for millisecond-response intelligent dialogue. It supports autonomous navigation with proactive obstacle avoidance, and the X2 Ultra adds automatic recharging through an optional dock. None of these features were part of the X1's research-focused design.

Notably, the X2 has fewer degrees of freedom (25 in the base model, 30 in the Ultra) compared to the X1's 34. This reduction reflects a deliberate design trade-off: AgiBot optimized the X2's joint configuration for the specific tasks it needed to perform (walking, running, dancing, cycling, interactive service) rather than maximizing the total joint count. The X1's higher DOF count was appropriate for a research platform where flexibility and experimentation were paramount.

The transition from X1 to X2 also reflects a shift in the software paradigm. While the X1 provides raw, full-stack open-source access intended for researchers who want to build from scratch, the X2 offers a more structured developer experience through the AimDK_X2 software layer, which allows R&D teams to develop applications without rebuilding low-level control loops.

The X1 occupies a specific niche within AgiBot's broader product portfolio. While the Yuanzheng A-series (A2, A2-W, A2-Max, A3) targets industrial and commercial customers, and the Genie G-series (G1, G2) serves wheeled industrial applications, the X-series addresses the research and education market.

This segmentation allows AgiBot to build a developer ecosystem around its core technologies (PowerFlow actuators, AimRT middleware, reinforcement learning pipelines) while simultaneously pursuing revenue from commercial robot sales. Researchers who prototype on the X1 may later recommend or adopt AgiBot's commercial products when scaling from laboratory experiments to real-world deployments.

The X1 also plays a role in AgiBot's data strategy. The open-source release of the X1's training code, combined with the AgiBot World manipulation dataset and the AIDEA data system, creates a comprehensive ecosystem for embodied AI research. This ecosystem positions AgiBot alongside organizations like OpenAI and Google DeepMind in the broader effort to develop general-purpose robotic intelligence, while maintaining a distinctive focus on hardware accessibility and open-source collaboration.

Although not exclusive to the X1, AgiBot's data collection infrastructure is closely related to the X1's open-source mission. In September 2024, AgiBot opened the AIDEA Giga Data Factory, a 4,000+ square meter facility in Shanghai where nearly 100 robots are teleoperated to generate training data for embodied AI models. Human operators use handheld devices and virtual reality headsets to guide robots through tasks such as grabbing, holding, and placing objects across five simulated environments: home, retail, service, dining, and industrial settings. The facility generates 30,000 to 50,000 data points per day.

This data feeds into the AgiBot World Colosseo platform, which was released as AgiBot World Beta on March 1, 2025, containing over one million trajectories spanning 217 tasks and 87 skills, with a total duration of 2,976 hours. The dataset covers more than 100 real-world scenarios and involves over 3,000 different objects. AgiBot World was an IROS 2025 Best Paper Award Finalist and was published in IEEE Transactions on Robotics (TRO) in 2026.
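
The headline figures above imply some simple per-trajectory averages, worked out in the short sketch below. Since the dataset is described as containing "over one million" trajectories, the count is treated as a lower bound, so the mean duration computed here is an upper bound and the per-task count a lower bound. The function names are illustrative only.

```python
TRAJECTORIES = 1_000_000  # "over one million", used here as a lower bound
TOTAL_HOURS = 2_976       # total duration stated above
TASKS = 217               # number of tasks stated above


def mean_trajectory_seconds(total_hours: float, trajectories: int) -> float:
    """Average trajectory length in seconds."""
    return total_hours * 3600 / trajectories


def mean_trajectories_per_task(trajectories: int, tasks: int) -> float:
    """Average number of trajectories recorded per task."""
    return trajectories / tasks


if __name__ == "__main__":
    print(round(mean_trajectory_seconds(TOTAL_HOURS, TRAJECTORIES), 1))
    print(int(mean_trajectories_per_task(TRAJECTORIES, TASKS)))
```

These are averages only; the source does not describe how trajectory lengths or task coverage are actually distributed.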

The AgiBot World Challenge, co-hosted by AgiBot and OpenDriveLab at IROS 2025, drew 431 robotics teams from 23 countries competing across manipulation and world model tracks, with a total prize pool of US$560,000. This challenge further cemented the X1 ecosystem's role in advancing global robotics research.