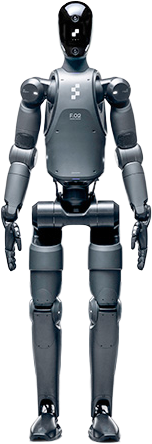

Figure 02

Last reviewed

May 17, 2026

Sources

No citations yet

Review status

Needs citations

Revision

v6 · 7,707 words

| | |
|---|---|
| Developer | Figure AI |
| Type | Humanoid robot |
| Generation | 2nd |
| Unveiled | August 6, 2024 |
| Height | 168 cm (5 ft 6 in) |
| Weight | 70 kg (154 lb) |
| Degrees of Freedom | 41 (total), 16 per hand |
| Battery | 2,250 Wh; ~5 hours runtime |
| Walking Speed | 1.2 m/s (2.7 mph) |
| Payload | 20 kg (44 lb) total; 7 kg per arm |
| Cameras | 6 RGB cameras |
| AI System | Helix VLA (in-house) |
| Actuators | Electric (custom) |
| Price | ~$100,000 (estimated) |

Figure 02 (also written as F.02) is the second-generation humanoid robot developed by Figure AI, a robotics company headquartered in San Jose, California. Unveiled on August 6, 2024, Figure 02 succeeded the company's prototype Figure 01 and was designed from the outset for sustained commercial deployment in manufacturing and logistics environments. The robot became notable for completing an 11-month pilot program at BMW's Spartanburg, South Carolina plant, where it contributed to the production of more than 30,000 BMW X3 vehicles and logged over 1,250 hours of operational runtime on a live production line.

Figure 02 features 41 total degrees of freedom, fourth-generation dexterous hands with 16 degrees of freedom per hand, a 2,250 Wh battery providing approximately five hours of continuous operation, and six RGB cameras for perception. From February 2025 onward, the robot runs the Helix vision-language-action (VLA) model entirely onboard, requiring no cloud connection for inference. The robot marked Figure AI's transition from research prototype to commercially deployed product, and it served as the company's flagship platform until the introduction of Figure 03 in October 2025.

Key aspects of Figure 02 include its all-electric custom actuators, integrated cabling, torso-mounted battery, redesigned five-fingered hands with 16 degrees of freedom each, and an onboard compute stack reportedly using three NVIDIA RTX-class AI modules. The robot has been deployed under a Robot-as-a-Service (RaaS) model and produced at the company's BotQ manufacturing facility in California.

Figure AI was founded in 2022 by Brett Adcock, an entrepreneur who previously co-founded Archer Aviation (an electric vertical takeoff and landing aircraft manufacturer) and Vettery (a recruiting platform later acquired by Adecco). Adcock self-funded an initial $100 million seed round and recruited a founding team of engineers from Boston Dynamics, Tesla, Apple, Google DeepMind, and IHMC Robotics. The company set out to build a commercially viable general-purpose humanoid robot capable of performing tasks in unstructured environments where conventional fixed-base industrial robots cannot operate.

Figure AI was originally based in Sunnyvale, California, occupying a 27,900-square-foot facility. In early 2025 the company relocated its headquarters to a 98,700-square-foot industrial flex building at the Assembly at North First tech campus in San Jose, located at 3960 North First Street, expanding its footprint roughly 3.5-fold. The new campus supports manufacturing, fleet operations, and engineering. Figure also leases additional research and development space at 3939 North First Street.

Figure AI's first robot, Figure 01, was introduced in March 2023. Standing 168 cm tall and weighing 60 kg, it demonstrated dynamic bipedal walking by October 2023 and completed autonomous tasks such as coffee preparation (after roughly 10 hours of demonstration training) by early 2024. Figure 01 served primarily as a research and development platform and relied on external cabling, a backpack-mounted battery, and limited onboard computing. Brett Adcock characterized Figure 01 as a "learner" robot, used to validate the company's approach to bipedal locomotion, vision-language reasoning, and dexterous manipulation. It was retired from frontline use in favor of Figure 02 and later models.

Figure AI has raised approximately $1.9 billion across multiple funding rounds, reaching a post-money valuation of $39 billion by September 2025.

| Round | Date | Amount | Valuation | Notable Investors |
|---|---|---|---|---|
| Seed | 2022 | ~$100 million | Undisclosed | Brett Adcock (personal investment) |
| Series A | May 2023 | $70 million | Undisclosed | Parkway Venture Capital, Aliya Capital, Bold Capital Partners, Tamarack Global, FJ Labs, Brett Adcock ($20M) |
| Series B | February 2024 | $675 million | $2.6 billion | Jeff Bezos (via Bezos Expeditions), Microsoft, NVIDIA, Intel Capital, OpenAI Startup Fund, ARK Invest, Parkway Venture Capital, Align Ventures |
| Series C | September 2025 | $1+ billion | $39 billion | Parkway Venture Capital (lead), Brookfield Asset Management, Intel Capital, Macquarie Capital, NVIDIA, Qualcomm Ventures, Salesforce, T-Mobile Ventures, LG Technology Ventures, Align Ventures, Tamarack Global |

The Series B round drew particular attention for its roster of high-profile investors. Jeff Bezos invested through Bezos Expeditions, while OpenAI participated through its Startup Fund. Series C, announced on September 16, 2025, represented a roughly 15-fold increase in valuation in 19 months. The proceeds were earmarked for accelerating manufacturing of Figure 03, expanding the BotQ facility, growing the AI data engine, and building reliability and safety infrastructure.
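The valuation multiple and the interval between the two rounds can be checked with a few lines of arithmetic. This is a minimal sketch using only the table's figures; the day of the month is ignored, so the month count is a whole-month approximation.

```python
from datetime import date

# Post-money valuations taken from the funding table above (USD).
series_b_valuation = 2.6e9   # February 2024
series_c_valuation = 39e9    # September 2025

multiple = series_c_valuation / series_b_valuation

# Whole months between the two rounds; only year and month are used.
start, end = date(2024, 2, 1), date(2025, 9, 1)
months = (end.year - start.year) * 12 + (end.month - start.month)

print(f"valuation multiple: {multiple:.1f}x over {months} months")
```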

Figure 02 was unveiled on August 6, 2024, in a launch video published by Figure AI to accompany a press release distributed via PR Newswire. The company described Figure 02 as a "ground-up hardware and software redesign" of the Figure 01 platform. The development was informed by data collected during Figure 01's deployments, particularly the early-stage trial at BMW's Spartanburg facility where Figure 01 had been used for training and data-collection purposes earlier in 2024.

Key design goals for Figure 02 included increasing onboard computing power, extending battery life, improving hand dexterity, integrating cabling within the limbs, lowering the center of gravity, and enabling fully autonomous operation without human teleoperation during deployment. The robot was positioned as Figure AI's first product intended for sustained commercial use rather than for demonstration purposes alone.

At launch, Figure 02 incorporated OpenAI technology for speech-to-speech conversation. The integration gave the robot the ability to understand spoken commands and respond verbally while performing tasks. Figure AI demonstrated this capability in launch videos showing the robot identifying objects, answering questions about its surroundings, and discussing its actions in real time. The OpenAI collaboration was discontinued in February 2025 (see AI software section below).

Industry coverage in IEEE Spectrum, The Robot Report, NVIDIA's company blog, IoT World Today, and Interesting Engineering described Figure 02 as a substantial generational advance over Figure 01, citing the integrated cabling, redesigned hands, larger battery, and tripled onboard compute as standout improvements. Brett Adcock publicly described Figure 02 as the "most advanced humanoid on the planet," framing the launch as the company's transition from research prototype to product organization.

Figure 02 stands 168 cm (5 feet 6 inches) tall and weighs 70 kg (154 pounds), roughly matching the dimensions of an average adult human. The frame is constructed from high-strength composites and lightweight metal alloys. Joints use miniature ball bearings to combine structural rigidity with smooth articulation. Unlike Figure 01, which used external cabling routed along the outside of the limbs, Figure 02 integrates all power and signal cabling within the limbs and houses the battery in the torso. This redesign improves both balance and durability, removes snag points, and gives the robot a smoother visual silhouette.

Figure 02's range of motion was tuned to approximate human-equivalent reach. Joint torque ratings reach up to 150 Nm at the largest actuators, with a stated maximum range of motion of up to 195 degrees at certain joints, enabling the robot to bend, reach overhead, squat, and rotate at the torso.

The robot has 41 total degrees of freedom distributed across its body, allowing movement patterns that approximate the human range of motion. The five-fingered hands account for 16 DOF each (32 DOF combined), with the remaining nine degrees of freedom distributed across the legs, torso, neck, and head. This configuration enables the robot to walk, bend, reach, and manipulate objects across a wide workspace. Some Figure AI sources cite a baseline figure of 35 DOF when excluding finer joints in the head; the company's most-quoted total is 41.
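The degree-of-freedom budget described above is simple to verify. The sketch below only restates the article's numbers (41 total, 16 per hand) and derives the remainder and the share of the total that sits in the hands; the variable names are illustrative.

```python
TOTAL_DOF = 41   # most-quoted total from the text
HAND_DOF = 16    # per hand
NUM_HANDS = 2

hands_total = HAND_DOF * NUM_HANDS        # DOF in both hands combined
body_dof = TOTAL_DOF - hands_total        # what is left for legs, torso, neck, head
hand_share = hands_total / TOTAL_DOF      # fraction of all DOF located in the hands

print(f"hands: {hands_total} DOF, rest of body: {body_dof} DOF")
print(f"share of DOF in the hands: {hand_share:.0%}")
```

On these figures, roughly four out of five degrees of freedom are in the hands, which is consistent with the emphasis the design places on dexterous manipulation.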

Figure AI designs and builds its own electric actuators in-house, with three primary classes distributed across the body. All share a common architecture of harmonic-style reduction drives, integrated motor controllers, and proprietary control firmware, and were engineered for high cycle counts under continuous industrial duty.

| Class | Peak torque | Range of motion | Typical location |
|---|---|---|---|
| A2 | ~50 Nm | 48 degrees | Wrist and small distal joints |
| L1 | ~150 Nm | 195 degrees | Shoulder, hip, large-load joints |
| L4 | ~150 Nm | 135 degrees | Knee, elbow, torso pitch |

Figure 02 inherited Figure 01's actuator philosophy but moved most signal and power conductors inside the structural shell of each limb, reducing exposed cable runs that had become a wear point on the prior generation.

| Parameter | Value |
|---|---|
| Height | 168 cm (5 ft 6 in) |
| Weight | 70 kg (154 lb) |
| Total degrees of freedom | 41 |
| Hand degrees of freedom | 16 per hand |
| Fingers per hand | 5 |
| Total payload capacity | 20 kg (44 lb) |
| Arm payload | 7 kg per arm |
| Hand carry capacity | Up to 25 kg (55 lb) |
| Maximum walking speed | 1.2 m/s (2.7 mph) |
| Battery capacity | 2,250 Wh |
| Battery life | ~5 hours |
| Cameras | 6 RGB cameras |
| Onboard compute | 3 NVIDIA RTX-class AI modules |
| Inference uplift over Figure 01 | ~3x |
| Actuator type | Electric (custom) |
| Stair climbing | Yes |
| Connectivity | Wi-Fi, 5G |
| Depth sensors | Yes |
| Force/torque sensors | Yes (joint level) |
| IMU | Yes |
| Speakers and microphones | Integrated (for speech I/O) |
| Estimated unit cost | ~$100,000 (industry estimate) |

The hands on Figure 02 represent the fourth generation of Figure AI's hand design. Each hand has five fingers with 16 degrees of freedom and human-equivalent grip strength. The joint system combines revolute joints for the finger phalanges with more complex articulations at the thumb base, supported by miniature ball bearings to achieve the range of motion and precision needed for fine manipulation. Rubberized grip pads on the fingertips provide friction without sacrificing tactile fidelity.

The hands can carry up to 25 kg (55 lb) per hand, adapt grip strength and finger positioning in real time at 200 Hz, and handle objects they have never previously encountered. During testing, the hands successfully manipulated delicate glassware, crumpled clothing, scattered small items, and standard industrial fixtures without requiring pre-programmed grasp configurations for each object type. Figure AI's hand actuator strategy uses cable-driven mechanisms with motors situated proximally in the forearm, with tendon routing through pulleys to drive distal finger joints.

Figure 02 carries a 2,250 Wh (approximately 2.25 kWh) battery integrated into the torso, compared to a backpack-mounted battery on Figure 01. Torso placement lowers the robot's center of gravity, improving balance and agility during locomotion. The battery provides approximately five hours of continuous operation under typical industrial workloads, a 50 percent increase over Figure 01's effective runtime. The pack is one of the largest single components of the robot's mass and was designed in-house, with thermal management systems integrated into the chassis.
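The capacity and runtime figures together imply an average power draw. The sketch below works that out under the simplifying assumption that the full 2,250 Wh is usable and drawn at a constant rate; the 600 W load in the second call is a hypothetical value chosen only to show how runtime scales, not a published figure.

```python
BATTERY_WH = 2250.0   # pack capacity from the text
RUNTIME_H = 5.0       # approximate runtime from the text

def average_power_w(capacity_wh: float, runtime_h: float) -> float:
    """Average draw implied by draining the whole pack over the runtime."""
    return capacity_wh / runtime_h

def runtime_hours(capacity_wh: float, load_w: float) -> float:
    """Runtime at a constant load, assuming the full capacity is usable."""
    return capacity_wh / load_w

avg_w = average_power_w(BATTERY_WH, RUNTIME_H)
print(f"implied average draw: {avg_w:.0f} W")

# Hypothetical heavier duty cycle (600 W is an assumed load, not a spec).
print(f"runtime at 600 W: {runtime_hours(BATTERY_WH, 600.0):.2f} h")
```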

Six RGB cameras provide visual coverage around the robot, enabling near-360-degree perception. Two cameras are mounted in the head for forward-facing stereo vision, with the remaining four positioned to cover lateral and rear approaches. The sensor suite also includes depth cameras, force/torque sensors at key joints, joint encoders, an inertial measurement unit (IMU) for body orientation, and joint-level current sensing that allows estimation of contact forces. This multi-sensor configuration feeds data into the robot's onboard AI system for real-time environmental understanding and task planning. Microphones and speakers in the head support spoken interaction.

Figure 02 walks on two legs at speeds up to 1.2 m/s (about 2.7 mph), traverses standard industrial flooring including ramps and grating, and can negotiate stairs. The walking gait is generated by a combination of model-based predictive controllers and learned policies, with the torso-integrated battery acting as ballast that lowers the center of mass and stabilizes the inverted-pendulum dynamics that govern bipedal walking. The robot can stand, squat, lean, and reach across a wide workspace without losing balance, and it uses its arms for postural recovery during disturbances.

Figure 02 supports both Wi-Fi and 5G cellular connectivity for fleet management, telemetry uplink, software updates, and remote diagnostics. All real-time control loops run locally on the robot, but cloud connectivity is used to upload teleoperation traces, sensor logs, and operational metrics to Figure AI's data infrastructure. The connectivity also enables remote troubleshooting of fielded units.

In February 2024, alongside its Series B funding announcement, Figure AI entered a collaboration agreement with OpenAI to develop AI models for humanoid robots. The partnership aimed to combine OpenAI's large language model research with Figure's robotics hardware and software expertise. Under this collaboration, Figure 02 gained speech-to-speech conversation capabilities, allowing operators and bystanders to communicate with the robot through natural language. The robot used onboard microphones and speakers to process spoken instructions and respond verbally, with OpenAI providing the multimodal language backbone.

The partnership lasted less than a year. On February 4, 2025, CEO Brett Adcock announced on X (formerly Twitter) that Figure AI was ending the collaboration. Adcock stated that Figure had achieved a "major breakthrough on fully end-to-end robot AI, built entirely in-house," making the external partnership unnecessary. He told TechCrunch: "We found that to solve embodied AI at scale in the real world, you have to vertically integrate robot AI." Adcock also cited practical difficulties, including challenges getting the OpenAI team to participate in joint development sessions and concerns when OpenAI indicated it wanted to pursue humanoid capabilities of its own.

The transition was abrupt. Adcock promised that Figure would unveil "something no one has ever seen on a humanoid" within 30 days. That promise was fulfilled when the company introduced its in-house Helix model on February 20, 2025.

After ending the OpenAI collaboration, Figure AI introduced Helix, a proprietary vision-language-action model that serves as the primary AI system for Figure 02 and subsequent robots. Helix uses a dual-system architecture inspired by Daniel Kahneman's distinction between fast and slow thinking, often labeled "System 1" and "System 2" in cognitive science:

System 2 (slow thinking): An onboard internet-pretrained vision-language model (VLM) with approximately 7 billion parameters operates at 7 to 9 Hz. It handles high-level scene understanding, language comprehension, and task planning. The model processes monocular robot images and proprioceptive state data (including wrist pose and finger positions) and outputs a continuous latent vector that conditions lower-level actions.

System 1 (fast acting): A reactive visuomotor policy with approximately 80 million parameters translates System 2's semantic understanding into precise motor actions at 200 Hz. It uses a fully convolutional, multi-scale vision backbone initialized from simulation pretraining and a cross-attention encoder-decoder transformer architecture. The latent vector from System 2 is projected into System 1's token space and concatenated with visual features along the sequence dimension. This subsystem controls the full action space for the upper body.

System 2 operates asynchronously as a background process while System 1 maintains a critical 200 Hz real-time control loop. This split allows the robot to think and react at different timescales simultaneously and decouples deliberate planning from reactive control.
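The decoupling of the two rates can be illustrated with a small tick-based simulation. This is a sketch under stated assumptions, not Figure AI's implementation: it uses a simulated clock instead of real asynchronous processes, fixes System 2 at 8 Hz (a value inside the quoted 7 to 9 Hz range), ignores System 2's inference latency, and uses placeholder functions (`system2_plan`, `system1_act`) that are hypothetical stand-ins for the real models.

```python
SYSTEM1_HZ = 200   # fast control loop rate from the text
SYSTEM2_HZ = 8     # assumed value within the stated 7 to 9 Hz range

def system2_plan(tick: int) -> dict:
    """Stand-in for the slow VLM: returns a 'latent' tagged with its creation tick."""
    return {"computed_at_tick": tick}

def system1_act(latent: dict, tick: int) -> int:
    """Stand-in for the fast policy: returns how stale (in ticks) its latent was."""
    return tick - latent["computed_at_tick"]

def simulate(seconds: int = 1) -> tuple:
    """Run the fast loop every tick and refresh the latent only at the slow rate."""
    ticks_per_plan = SYSTEM1_HZ // SYSTEM2_HZ
    latent = system2_plan(0)          # initial plan before the loop starts
    plans, actions, max_staleness = 1, 0, 0
    for tick in range(seconds * SYSTEM1_HZ):
        if tick > 0 and tick % ticks_per_plan == 0:
            latent = system2_plan(tick)   # slow layer publishes a new latent
            plans += 1
        # The fast layer never waits: it always acts on the latest latent.
        max_staleness = max(max_staleness, system1_act(latent, tick))
        actions += 1
    return plans, actions, max_staleness

plans, actions, max_stale = simulate(1)
print(f"plans={plans} actions={actions} max staleness={max_stale} ticks")
```

In one simulated second the fast loop acts 200 times on only 8 plans, so each plan conditions 25 consecutive control steps. The design point is that the fast loop's timing does not depend on how long the slow model takes to produce its next output.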

Helix was trained on approximately 500 hours of diverse teleoperated behavior data, with auto-labeling VLMs generating natural language instruction pairs for each behavior segment in a hindsight relabeling scheme. The model uses a standard regression loss and requires no task-specific fine-tuning. A single set of neural network weights handles picking, placing, drawer and refrigerator operation, and cross-robot interaction.

Figure AI has claimed several firsts for Helix: it was the first VLA to output high-rate continuous control of the entire humanoid upper body (including wrists, torso, head, and individual fingers); the first VLA to operate simultaneously on two robots solving a shared long-horizon manipulation task with previously unseen items; and the first VLA to run entirely onboard embedded low-power GPUs without requiring a cloud connection.

Figure 02 carries three NVIDIA RTX-class AI modules onboard, providing approximately three times the computing and AI inference capability of Figure 01. Helix uses two of them in a dual-GPU configuration that splits processing between its two systems: one GPU handles the slower System 2 VLM inference, while the other runs the high-frequency System 1 motor control. The third compute resource is reserved for vision preprocessing and sensor fusion. All inference runs locally on the robot with no dependency on external servers or cloud infrastructure for control. This onboard-only design lets the robot continue working when network connectivity is interrupted and avoids the latency and privacy concerns associated with cloud-based humanoid control.

In the months after Helix's debut, Figure AI continued to publish updates demonstrating scaled training data, longer-horizon behaviors, and full-body autonomous control. Subsequent posts on the Figure AI news blog described "Helix 02" with extended training sets exceeding 1,000 hours of human motion data and a learned whole-body controller termed "System 0," used to coordinate locomotion alongside upper-body manipulation. Figure 02 received over-the-air software updates that brought new Helix capabilities to fielded units.

In early 2026, Figure AI introduced Helix 02 as a major revision of the underlying VLA stack. The release added a new foundation layer beneath System 1 and System 2, formally designated System 0, which runs the lowest-level whole-body control loop at 1 kHz. System 0 is a learned prior over how a humanoid balances, makes contact, and transfers load between feet, intended to act as a stable substrate for the higher policy layers to build on. The complete stack therefore spans three timescales:

| Layer | Frequency | Function |
|---|---|---|
| System 2 (slow reasoning) | 7 to 9 Hz | Internet-pretrained vision-language model handling scene understanding, language, and goal sequencing |
| System 1 (fast control) | 200 Hz | Reactive visuomotor policy emitting full-body joint targets for legs, torso, head, arms, wrists, and fingers |
| System 0 (whole-body control) | 1 kHz | Learned balance and contact controller maintaining stability across the whole kinematic chain |
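To make the three timescales concrete, the rates in the table can be converted into update periods and into the number of faster-layer ticks that elapse per slower-layer update. The sketch below uses only the table's frequencies; the helper names are illustrative.

```python
# Rates from the table; System 2 is given as a 7 to 9 Hz range.
RATES_HZ = {
    "System 0": (1000, 1000),
    "System 1": (200, 200),
    "System 2": (7, 9),
}

def period_ms(rate_hz: float) -> float:
    """Time between consecutive updates, in milliseconds."""
    return 1000.0 / rate_hz

def ticks_per_update(fast_hz: float, slow_hz: float) -> float:
    """How many fast-layer ticks elapse during one slow-layer update."""
    return fast_hz / slow_hz

for name, (low_hz, high_hz) in RATES_HZ.items():
    shortest, longest = period_ms(high_hz), period_ms(low_hz)
    print(f"{name}: {shortest:.1f} to {longest:.1f} ms per update")

s0_per_s1 = ticks_per_update(1000, 200)
s1_per_s2 = (ticks_per_update(200, 9), ticks_per_update(200, 7))
print(f"System 0 ticks per System 1 step: {s0_per_s1:.0f}")
print(f"System 1 steps per System 2 update: {s1_per_s2[0]:.1f} to {s1_per_s2[1]:.1f}")
```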

The Helix 02 release also exposed a new tactile sensing pathway and expanded camera inputs for robots that supported them, including head cameras, palm cameras, and fingertip force sensors on later Figure 03 hardware. Helix 02 ran on Figure 02 robots through over-the-air updates, though some capabilities tied to palm cameras and fingertip tactile sensing remained exclusive to Figure 03 hardware. On Figure 02 the upgrade primarily improved manipulation stability, locomotion-manipulation coordination, and the length of horizon over which the robot could perform autonomous tasks.

Through mid-2026, Figure AI published increasingly long autonomous demonstrations powered by Helix and Helix 02 on Figure 02 hardware:

| Date | Milestone |
|---|---|
| February 2025 | First Helix demo: two Figure 02 robots collaboratively put away groceries with no task-specific programming |
| February 2025 | Logistics package handling teaser showing rapid, dexterous sorting of mixed parcels |
| August 2025 | Scaled Helix logistics policy hitting 4.05 seconds per package and 95 percent correct barcode orientation |
| January 2026 | Four-minute end-to-end dishwasher unload and reload demonstration, 61 consecutive loco-manipulation actions with no human resets |
| May 2026 | Public demonstration of a full eight-hour autonomous shift run by Helix 02 on Figure hardware |

The eight-hour shift milestone was significant because it matched the duration of a standard industrial workday, addressing long-standing skepticism that humanoid robots could only operate for short windows before requiring human resets or recharging.

The most significant real-world deployment of Figure 02 took place at the BMW Group Plant Spartanburg in South Carolina, one of BMW's largest manufacturing facilities globally and the source of every BMW X3, X4, X5, X6, X7, and XM crossover sold worldwide. The partnership between Figure AI and BMW was announced on January 18, 2024 in a joint press release, with the agreement initially involving Figure 01 for data collection and feasibility assessment in the early phase. Figure 02 was subsequently deployed on an active production line, where it operated for 11 months. BMW Manufacturing CEO Robert Engelhorn described the project as part of the plant's broader effort to integrate emerging robotics into automotive assembly.

Figure 02 worked 10-hour shifts Monday through Friday on the Spartanburg assembly line. The robot's primary task was sheet-metal loading: lifting parts from racks, transporting them across a short staging area, and placing them onto welding fixtures with a 5-millimeter tolerance in approximately 2 seconds per placement. After Figure 02 placed each part, conventional six-axis robotic arms performed the welding in standard automotive body-in-white fashion. The deployment had strict key performance indicators (KPIs):

| Metric | Target / Result |
|---|---|
| Total deployment duration | 11 months |
| Shift length | 10 hours (Monday through Friday) |
| Total runtime | 1,250+ hours |
| Parts handled | 90,000+ sheet-metal parts |
| Vehicles contributed to | 30,000+ BMW X3 |
| Cycle time | 84 seconds total (37 seconds for loading) |
| Placement tolerance | 5 mm |
| Placement accuracy target | >99% per shift |
| Human intervention target | Zero per shift |
| Distance traveled | 200+ miles (~1.2 million robot steps) |
| Period of operation | August 2024 to mid-2025 |

The specific task assigned to Figure 02 was a body-in-white sheet-metal loading operation, a job widely regarded as ergonomically challenging for human workers due to the repetitive lifting, awkward reaching postures, and the precision required to seat parts in their welding jigs. Figure 02 picked parts from inbound bins, oriented them using a combination of vision and force feedback, and placed them onto a welding fixture where automated welding cells took over. The 84-second cycle was tightly synchronized with the broader assembly line, and the robots had to consistently meet the 5-millimeter placement tolerance to avoid disrupting downstream operations.
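A few derived quantities help put the deployment figures in proportion. The sketch below uses the reported totals as if they were exact, although most are stated as lower bounds ("1,250+", "90,000+"), so the results are rough ratios rather than measured values.

```python
# Reported totals from the Spartanburg deployment (treated as exact here).
RUNTIME_H = 1250
PARTS = 90_000
VEHICLES = 30_000
CYCLE_S = 84
MILES = 200
STEPS = 1_200_000
METERS_PER_MILE = 1609.344

parts_per_vehicle = PARTS / VEHICLES              # parts loaded per X3
parts_per_hour = PARTS / RUNTIME_H                # average handling rate
cycles_in_runtime = RUNTIME_H * 3600 / CYCLE_S    # 84-second cycles that fit in the runtime
meters_per_step = MILES * METERS_PER_MILE / STEPS # average distance covered per step

print(f"parts per vehicle: {parts_per_vehicle:.1f}")
print(f"parts per runtime hour: {parts_per_hour:.1f}")
print(f"84 s cycles in total runtime: {cycles_in_runtime:.0f}")
print(f"average distance per step: {meters_per_step:.2f} m")
```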

Public reporting on the Spartanburg pilot characterized it as an explicit learning exercise rather than a fixed deployment. Early in the program, success rates on the body-in-white loading task hovered near 25 percent per cycle, with frequent interventions to reset misaligned parts. Over the 11 months, iterative software updates to the Helix policy stack, hardware tweaks, and tooling adjustments lifted single-cycle success rates to near-perfect levels. Figure AI reported a roughly 400 percent improvement in cycle speed and a sevenfold improvement in success rate between the program's start and its conclusion. By the tenth month, the robots were running complete 10-hour shifts without human supervision on the loading station. Third-party industry estimates put the resulting cycle-time reduction on the affected segment at 20 to 30 percent and the OEE (overall equipment effectiveness) improvement at around 15 percent, although BMW did not formally publish those numbers.

The deployment generated valuable data for Figure AI's engineering team. Accuracy stayed above 99 percent, the robots completed cycles within the required timeframes, and human intervention was minimal. However, the 1,250-plus hours of operation also revealed hardware weaknesses. The forearm emerged as the top hardware failure point, due to its tight packaging, three-degree-of-freedom dexterity requirements, and thermal constraints. Cabling routed through the wrist also showed wear over many actuation cycles. These findings directly informed the design of Figure 03, where engineers redesigned the wrist electronics to eliminate distribution boards and dynamic cabling, enabling direct motor controller communication with the main computer.

Figure AI also gathered substantial training data during the BMW deployment. Each pick-and-place cycle generated multiple modalities of sensor data (vision, force, joint positions, IMU readings) that were uploaded to Figure's cloud infrastructure for use in training successive iterations of Helix and informing improvements to the robot's locomotion and manipulation policies.

A second lesson concerned manufacturability. Figure 02 was largely produced through CNC machining, a process well suited to small build volumes but poorly matched to the cost and throughput targets needed for fleet deployment. Figure AI publicly cited the Spartanburg experience as the empirical basis for the manufacturing pivot that produced the BotQ facility and the tooled-process design of Figure 03.

In November 2025, Figure AI publicly retired the Figure 02 units deployed at Spartanburg. Brett Adcock posted images and video of the retired robots, characterizing them as "battle-scarred" and showing visible scuffs, scratches, paint wear, and contact marks consistent with extended industrial duty. BGR, Interesting Engineering, and Humanoids Daily covered the retirement as a milestone demonstrating that production-grade humanoid hardware could survive nearly a year of continuous shift work on a live automotive line. The retired robots were preserved as engineering artifacts, with their sensor logs and wear patterns feeding into Figure 03 design reviews. The retirement also marked the transition of Figure AI's flagship platform from Figure 02 to Figure 03.

Following the Spartanburg pilot, BMW announced in February 2026 that it would expand humanoid robot deployment to its Leipzig, Germany plant, marking the first use of humanoid robots in European automotive production. BMW also unveiled a new "Center of Competence for Physical AI in Production," formalizing humanoid integration as part of its long-term automation strategy. The Leipzig pilot uses AEON robots from Hexagon Robotics rather than Figure units, suggesting BMW is evaluating multiple humanoid platforms in parallel as the technology matures.

In late December 2024, Figure AI announced that it had shipped Figure 02 robots to its first paying commercial customer, making it a revenue-generating company. Brett Adcock posted on X: "Today, Figure officially became a revenue-generating company! This week, we delivered F.02 humanoid robots to our commercial client, & they're currently hard at work." The milestone was reached 31 months after the company was incorporated as a C corporation. The customer's identity was not publicly disclosed, although the deployment was described as taking place in an industrial environment. BMW's Spartanburg trial was a separately structured pilot and was not the December 2024 paying customer.

Figure AI has adopted a Robot-as-a-Service (RaaS) business model for commercial deployments. Industry estimates have placed the subscription price at approximately $1,000 per robot per month (roughly $12,000 per year) for early customers, although other reports cite higher monthly figures depending on the scope of services bundled. The subscription approach typically covers hardware deployment, software updates over the air, preventive and reactive maintenance, fleet management, and on-site support. The RaaS model lowers capital expenditure for customers, reduces hardware obsolescence risk, and provides Figure AI with predictable recurring revenue.
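The quoted subscription price can be set against the estimated unit cost to see how the two figures relate. This is a deliberately naive sketch: both inputs are industry estimates from the text, and it ignores maintenance, support, financing, and software costs, so the result is an order-of-magnitude comparison, not a payback analysis.

```python
MONTHLY_FEE_USD = 1_000        # estimated RaaS price per robot per month
UNIT_COST_USD = 100_000        # estimated unit cost (industry estimate)

annual_fee = MONTHLY_FEE_USD * 12
# Months of subscription revenue needed to equal the estimated unit cost,
# ignoring every other cost and revenue item.
months_to_match_cost = UNIT_COST_USD / MONTHLY_FEE_USD

print(f"annual subscription: ${annual_fee:,}")
print(f"months of fees equal to unit cost: {months_to_match_cost:.0f} "
      f"(about {months_to_match_cost / 12:.1f} years)")
```

The gap between the two estimates is consistent with the text's note that other reports cite higher monthly figures.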

Third-party analyses have estimated that the total bill of materials for Figure 02 falls in the range of tens of thousands of dollars, with the all-in cost including custom actuators, batteries, GPUs, and structural components placing it well above conventional industrial robots. Public commentary from Brett Adcock and Figure AI has consistently framed price reduction as a primary objective, with Figure 03 targeting a roughly 90 percent component cost reduction relative to Figure 02 through tooled manufacturing processes and supply-chain consolidation.

In March 2025, Figure AI unveiled BotQ, a vertically integrated manufacturing facility in California designed for high-volume humanoid robot production. The first-generation manufacturing line is capable of producing up to 12,000 humanoid robots per year, with a stated goal of manufacturing 100,000 robots over a four-year period.

BotQ represents a shift from prototyping methods to production-oriented manufacturing. Components that previously required over a week of CNC machining now take under 20 seconds using steel molds, injection molding, die-casting, metal injection molding, and stamping. The facility features automated grease dispensing for motor gearboxes and robotic cell testing for battery components. Final assembly is performed by a combination of human technicians and Figure 02 robots themselves.

One distinctive aspect of BotQ is the integration of Figure's own humanoid robots into the assembly process. Using the Helix AI system, Figure robots assist with assembling key production line components and handle material movement between stations, replacing conventional conveyor systems. The company built custom Manufacturing Execution Software (MES) along with PLM, ERP, and WMS systems over a six-month development period to support scalable manufacturing.

By late 2025 and early 2026, the BotQ facility had ramped Figure 03 production from one robot per day to one robot per hour, a 24-fold throughput improvement, signaling that the manufacturing approach pioneered for Figure 02 was scaling effectively for the next-generation platform.
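The production figures quoted for BotQ can be compared on a common annual basis. The sketch below assumes round-the-clock operation when converting "one robot per hour" into a yearly figure, which is the same assumption implied by the 24-fold comparison; actual shift patterns are not stated in the text.

```python
LINE_CAPACITY_PER_YEAR = 12_000   # first-generation line capacity
TARGET_ROBOTS = 100_000           # stated four-year goal
TARGET_YEARS = 4
HOURS_PER_DAY = 24                # assumes continuous operation
DAYS_PER_YEAR = 365

flat_output = LINE_CAPACITY_PER_YEAR * TARGET_YEARS   # output if capacity never grows
required_avg_per_year = TARGET_ROBOTS / TARGET_YEARS  # average rate the goal implies
speedup = HOURS_PER_DAY / 1                           # one per hour vs one per day
hourly_rate_per_year = HOURS_PER_DAY * DAYS_PER_YEAR  # robots per year at one per hour

print(f"four years at initial capacity: {flat_output:,} robots")
print(f"average needed for the goal: {required_avg_per_year:,.0f} per year")
print(f"one per hour vs one per day: {speedup:.0f}x, {hourly_rate_per_year:,} per year")
```

On these numbers, the four-year goal requires roughly double the initial line capacity on average, so it presupposes additional lines or higher throughput.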

Figure 02 represented a substantial upgrade over the Figure 01 prototype across nearly every dimension.

| Feature | Figure 01 | Figure 02 |
|---|---|---|
| Unveiled | March 2023 | August 2024 |
| Weight | 60 kg | 70 kg |
| Degrees of freedom | 41 | 41 |
| Hand DOF | Basic grippers | 16 per hand (4th gen) |
| Battery capacity | ~1,500 Wh | 2,250 Wh (+50%) |
| Battery location | Backpack-mounted | Torso-integrated |
| Onboard compute | Limited | 3 NVIDIA RTX-class AI modules (3x increase) |
| AI system | OpenAI integration | Helix VLA (in-house) |
| Cabling | External | Integrated within limbs |
| Cameras | Limited | 6 RGB cameras (near-360-degree coverage) |
| Autonomy | Heavy reliance on teleoperation | End-to-end neural network autonomy |
| Commercial status | Research prototype | Commercially deployed |
| Stair climbing | Limited | Yes |
| Connectivity | Wi-Fi | Wi-Fi, 5G |

The most significant improvements were the threefold increase in onboard computing power, the 50 percent larger battery with torso integration, the fourth-generation dexterous hands, the shift from external cabling to integrated wiring, and the transition from reliance on external AI partners to a fully in-house AI stack.
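The percentage figures in the comparison can be reproduced from the table values. The following is a minimal sketch, not a Figure AI calculation: the Figure 01 battery capacity is itself approximate (~1,500 Wh), and the energy-per-kilogram ratio is a derived illustrative metric that the company does not report.

```python
# Reproduce the generation-over-generation deltas from the comparison table.
# Input values come from the table; the Figure 01 battery figure is approximate.

def percent_change(old: float, new: float) -> float:
    """Return the change from old to new as a percentage of old."""
    return (new - old) / old * 100.0

figure_01 = {"battery_wh": 1500.0, "weight_kg": 60.0}
figure_02 = {"battery_wh": 2250.0, "weight_kg": 70.0}

battery_gain = percent_change(figure_01["battery_wh"], figure_02["battery_wh"])
weight_gain = percent_change(figure_01["weight_kg"], figure_02["weight_kg"])

# Illustrative derived metric (an assumption of this sketch, not a published
# spec): battery energy per kilogram of total robot mass.
wh_per_kg_01 = figure_01["battery_wh"] / figure_01["weight_kg"]
wh_per_kg_02 = figure_02["battery_wh"] / figure_02["weight_kg"]

print(f"Battery capacity: {battery_gain:+.1f}%")
print(f"Robot weight:     {weight_gain:+.1f}%")
print(f"Energy per kg:    {wh_per_kg_01:.1f} -> {wh_per_kg_02:.1f} Wh/kg")
```

The battery grew by 50 percent while the robot's mass grew by about 17 percent, so stored energy per kilogram of robot increased even though the robot became heavier.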

In October 2025, Figure AI introduced Figure 03, the third-generation humanoid designed for both home and factory environments. Figure 03 incorporates lessons from the Figure 02 BMW deployment and is the first Figure robot engineered from the ground up for high-volume manufacturing at BotQ.

Key differences from Figure 02 include: a company-stated 9 percent reduction in mass with significantly less volume (the listed weights of 61 kg and 70 kg imply a reduction closer to 13 percent); soft textile coverings instead of hard machined parts to reduce injury risk during home use; tactile fingertip sensors detecting forces as small as 3 grams; a redesigned camera system with double the frame rate, one-quarter the latency, and 60 percent wider per-camera field of view; hand-mounted palm cameras (eight cameras total instead of six); wireless inductive charging at 2 kW through coils in the feet; 10 Gbps mmWave data offload; and actuators that are twice as fast and have improved torque density.

Figure AI also reported a roughly 90 percent component cost reduction for Figure 03 relative to Figure 02, achieved through tooled manufacturing processes such as injection molding and die-casting, in-house actuator production, and supply-chain consolidation. Despite the differences, Figure 02 remained Figure AI's most-deployed unit during 2025 and continued to receive Helix software updates over the air.

Figure 02 entered a rapidly growing humanoid robotics market with several well-funded competitors. The table below summarizes the leading systems as of early 2026.

| Company | Robot | Key Features | Status (as of early 2026) |
|---|---|---|---|
| Figure AI | Figure 02 / Figure 03 | Helix VLA, BMW deployment, BotQ factory | Commercial (limited deployment), $39B valuation |
| Tesla | Optimus | Target price $20,000-$30,000, Tesla factory use | Prototyping; Optimus Gen 3 mass production at Fremont began January 2026 |
| Boston Dynamics | Atlas (electric) | 56 DOF, 50 kg lift, Hyundai backing | Commercial launch at CES 2026 |
| Apptronik | Apollo | 25 kg payload, Mercedes-Benz pilots | Commercial pilots |
| Sanctuary AI | Phoenix | Hydraulic actuators, hand-focused | Commercial pilots |
| Agility Robotics | Digit | Bipedal, logistics-focused | $2.1B valuation, Amazon and GXO pilots |
| 1X Technologies | NEO | Lightweight, home-oriented, tendon-driven | Beta deployments to homes |
| Unitree Robotics | H1 / H2 | Low cost, open ecosystem | Commercial |
| Xpeng | Iron | Chinese consumer-facing humanoid | Demonstrations and limited deployments |
| AgiBot | A1 / A2 | Chinese general-purpose humanoid | Commercial pilots |

Tesla's Optimus is often cited as Figure's most direct competitor due to similar ambitions for factory automation and eventual consumer use. During Tesla's Q4 2025 earnings call, CEO Elon Musk acknowledged that no Optimus robots were performing useful factory work despite earlier claims of more than 1,000 deployed units. Optimus Gen 3, with 22-DOF tendon-driven hands, began mass production in January 2026 at Tesla's Fremont, California facility. Boston Dynamics' all-electric Atlas, showcased at CES 2025 and CES 2026, is widely considered the most technically advanced humanoid demonstration to date, with planned deployments at Hyundai and Google DeepMind facilities. Agility Robotics' Digit was reported in early 2026 to be the only humanoid generating sustained revenue from productive commercial work, having moved more than 100,000 totes at GXO warehouses and signed paying contracts with Toyota and Mercado Libre.

Figure AI's $39 billion valuation, achieved with only a limited number of commercial units deployed, reflects investor confidence in the long-term potential of humanoid robotics rather than current revenue. The broader humanoid robotics sector saw significant capital inflows in 2024 and 2025, with multiple companies raising hundreds of millions or billions of dollars and a wave of new entrants from China, including Xpeng, AgiBot, and Unitree Robotics.

In 2025, Figure AI released a series of public demonstrations showing Figure 02 (and later Figure 03) performing household tasks under Helix control. Tasks included unloading and folding laundry, putting away groceries from a cluttered counter into refrigerator and pantry compartments, sorting and stacking dishware, and using cleaning tools. The robot performed these chores with no task-specific programming, relying on Helix's generalist policy and natural-language scene understanding. The demonstrations were used to support Figure's broader argument that the same policy that drives industrial pick-and-place can be retargeted to domestic manipulation.

Figure AI has demonstrated two Figure robots cooperating on a shared task, such as one robot handing an unseen object to another for storage. Helix policies are shared across both robots, and the system handles the implicit hand-off coordination through the unified latent representation produced by the System 2 VLM. This was cited by the company as the first VLA demonstrated to coordinate two humanoid robots simultaneously.

In February 2025, Figure AI released a 90-second teaser of Figure 02 robots rapidly sorting and routing logistics packages under Helix control. The demonstration showed the robot picking parcels of varying shapes and rigidity from a conveyor, orienting their barcodes, and depositing them into outbound bins. A later August 2025 update under the heading "Scaling Helix Logistics" reported quantified improvements:

| Logistics metric | Initial Helix policy | Scaled Helix policy |
|---|---|---|
| Per-package handling time | ~5.0 seconds | 4.05 seconds |
| First-pass barcode orientation accuracy | ~70 percent | ~95 percent |
| First-pass barcode scan success | not separately reported | ~94 percent |

The scaled policy introduced a vision memory module that gave Helix stateful perception over short horizons, allowing the robot to recall recent observations across hand-offs and re-attempts. Figure AI also reported that subtle behaviors had emerged from teleoperated demonstration data, including gentle pats to flatten the surface of plastic polybag mailers before scanning. Industry reporting suggested the logistics work targeted at least one large US delivery operator that had publicly expressed interest in humanoid package handling.
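The per-package handling times in the logistics table translate directly into hourly throughput. The sketch below is a rough illustration that assumes a single robot working continuously with no idle time between parcels. Figure AI did not publish hourly throughput, so the derived numbers are estimates rather than reported results.

```python
# Convert per-package handling time into an idealized hourly throughput.
# Assumption (not from Figure AI): one robot, continuous operation, no gaps.

SECONDS_PER_HOUR = 3600.0

def packages_per_hour(seconds_per_package: float) -> float:
    """Idealized throughput for a given per-package handling time."""
    return SECONDS_PER_HOUR / seconds_per_package

initial_rate = packages_per_hour(5.0)   # initial Helix policy (~5.0 s)
scaled_rate = packages_per_hour(4.05)   # scaled Helix policy (4.05 s)

# Relative throughput gain of the scaled policy over the initial one.
throughput_gain_pct = (scaled_rate / initial_rate - 1.0) * 100.0

print(f"Initial policy: {initial_rate:.0f} packages/hour")
print(f"Scaled policy:  {scaled_rate:.0f} packages/hour")
print(f"Throughput gain: {throughput_gain_pct:.1f}%")
```

Under these idealized assumptions, the drop from about 5.0 to 4.05 seconds per package corresponds to roughly 720 versus 889 packages per hour, a gain of about 23 percent.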

In January 2026, Figure AI released a four-minute continuous video of a Figure 02 robot, controlled by Helix 02, unloading and reloading a full-sized residential dishwasher. The robot navigated across a kitchen, opened the dishwasher, removed clean dishes, placed them in upper and lower cabinets, returned to collect dirty dishes, loaded them, added detergent, and closed the unit with a hip bump. The full sequence comprised 61 consecutive loco-manipulation actions executed with no human resets and no scripted plan, with Helix 02 selecting actions from its onboard policy in real time. Figure AI described the demonstration as the longest-horizon autonomous task executed by any humanoid robot to that point. Reviewers noted human-like flourishes that emerged from the motion-capture-augmented training set, including using a foot to keep the dishwasher door open and using the hip to nudge a drawer shut, behaviors that had not been explicitly programmed.

Figure 02 supports natural-language interaction through onboard speakers and microphones. While the original OpenAI-based speech stack was retired in February 2025, Helix has since absorbed conversational and instruction-following capabilities, allowing operators to issue verbal commands such as "put the dishes in the rack" or "bring me the wrench from the toolbox." The robot uses its onboard VLM to ground these instructions in the visual scene before executing them with the System 1 motor policy.
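The division of labor described above, in which a slower vision-language model grounds the instruction and a faster motor policy executes it, can be pictured with a toy two-rate loop. Everything in this sketch is hypothetical: the `Latent` class, the function names, the keyword-matching "grounding", and the tick counts are stand-ins chosen for illustration and do not reflect Helix's actual interfaces, models, or update rates.

```python
# Toy illustration of a two-level controller: a slow "System 2" step that
# grounds an instruction in the scene, and a fast "System 1" step that acts
# on the most recent grounding. All names and rates here are hypothetical.

from dataclasses import dataclass
from typing import List


@dataclass
class Latent:
    """Hypothetical stand-in for the representation passed between systems."""
    action: str
    target: str


def system2_ground(instruction: str, visible_objects: List[str]) -> Latent:
    """Toy grounding: pick the first visible object named in the instruction."""
    words = instruction.lower().split()
    for obj in visible_objects:
        if obj.lower() in words:
            return Latent(action=words[0], target=obj)
    raise ValueError("instruction does not mention any visible object")


def system1_step(latent: Latent, tick: int) -> str:
    """Toy motor policy: emit one low-level command per fast tick."""
    return f"tick {tick}: {latent.action} -> {latent.target}"


def run(instruction: str, visible_objects: List[str],
        slow_ticks: int = 2, fast_ticks_per_slow_tick: int = 4) -> List[str]:
    """Run the slow loop, executing several fast steps per slow update."""
    log: List[str] = []
    tick = 0
    for _ in range(slow_ticks):
        latent = system2_ground(instruction, visible_objects)  # slow update
        for _ in range(fast_ticks_per_slow_tick):              # fast updates
            log.append(system1_step(latent, tick))
            tick += 1
    return log


log = run("bring me the wrench from the toolbox",
          ["hammer", "wrench", "toolbox"])
for line in log:
    print(line)
```

The point of the structure is that the fast loop never waits on language understanding: it keeps acting on the latest grounding until the slow loop supplies a new one.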

In November 2025, Robert Gruendel, Figure AI's former principal robotic safety engineer and head of product safety, filed a lawsuit against the company in federal court in the Northern District of California. The case was docketed as Gruendel v. Figure AI, Inc., 5:2025cv10094. Gruendel alleged that he was wrongfully terminated after warning top executives, including CEO Brett Adcock and chief engineer Kyle Edelberg, that Figure's robots "were powerful enough to fracture a human skull."

According to the complaint, internal impact testing on the Figure 02 model generated forces reportedly more than double those required to break an adult skull. Gruendel also alleged that one robot "had already carved a quarter-inch gash into a steel refrigerator door during a malfunction" (some accounts described the gash as three-quarters of an inch deep). The lawsuit further claimed that a product safety plan presented to prospective investors during a funding round was significantly reduced after the round closed, a move Gruendel characterized as potentially fraudulent. The complaint stated that Gruendel was dismissed in September 2025, days after lodging his most direct safety complaints.

A Figure AI spokesperson said Gruendel was "terminated for poor performance," and that his "allegations are falsehoods that Figure will thoroughly discredit in court." The case was pending in early 2026.

The lawsuit drew attention from CNBC, Reuters, Bloomberg, Futurism, The Robot Report, and other outlets, sparking broader discussion about safety standards for humanoid robots intended for use alongside human workers and, eventually, in homes. The episode highlighted that consensus safety frameworks for general-purpose humanoids are still emerging, in contrast with the well-established cage-based safeguards used for traditional industrial robots.

IEEE Spectrum described Figure 02 as a "meaningful step forward" for commercial humanoids, citing its integrated cabling, larger battery, and improved hands. The Robot Report and NVIDIA's company blog highlighted the robot's onboard compute uplift and the strategic significance of running Helix entirely without cloud connectivity. Industry observers including Mike Kalil, Contrary Research, and Sacra characterized Figure 02 as one of the most credible Western contenders in the humanoid race, alongside Tesla's Optimus and Boston Dynamics' Atlas.

Skepticism has also emerged. In April 2025, Fortune published a piece questioning whether Figure AI's BMW partnership was as deep as Brett Adcock's public statements suggested. It noted that for stretches of the trial only a single Figure 02 was operating at the plant rather than a fleet, and that the robot was effectively performing one well-bounded pick-and-place task rather than the broader range of operations sometimes implied. The eventual completion of the 11-month pilot, the publication of detailed BMW press materials, and the transition to a follow-on European program at Leipzig partially answered these critiques while leaving open longer-term questions about scalability and economics.

By 2026, industry commentators framed Figure 02 less as a finished product and more as a milestone platform that proved several specific claims: that an electric humanoid could survive nearly a year of industrial duty, that a generalist VLA could be retargeted across factory, logistics, and household tasks without per-task fine-tuning, and that the manufacturing transition from CNC to tooled processes was a credible cost-reduction path. Critics continued to point out that the published commercial customer base remained small and that the safety questions raised in the Gruendel litigation had yet to be resolved. Nonetheless, the retirement of the Spartanburg fleet and the publication of detailed operational metrics gave Figure 02 a level of empirical record uncommon among Western humanoid programs.