ROBOTIS AI Worker

Last reviewed

Apr 26, 2026

Sources

32 citations

Review status

Source-backed

Revision

v4 · 4,576 words

| ROBOTIS AI Worker | |
|---|---|
| General information | |
| Manufacturer | ROBOTIS |
| Country of origin | South Korea |
| Year introduced | 2025 |
| Status | In production (made to order) |
| Price | Contact for quote |
| Lead time | ~8 weeks |
| Availability | Available (ships from Korea) |
| Website | ai.robotis.com |

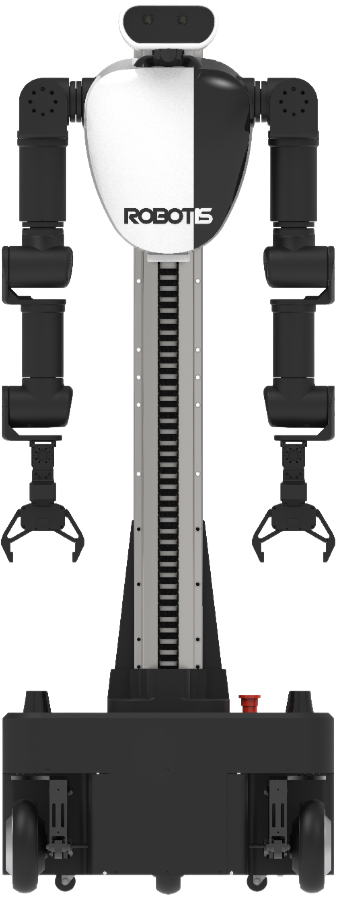

The ROBOTIS AI Worker, formally designated FFW (Freedom From Work), is a semi-humanoid robot platform developed by ROBOTIS Co., Ltd., a South Korean robotics company headquartered in Seoul. Launched in 2025, the AI Worker is designed for industrial automation tasks including assembly, welding, visual inspection, and logistics. Unlike fully bipedal humanoids, the AI Worker combines a human-like upper body (dual 7-degree-of-freedom arms, a 2-DOF head, and dexterous grippers) with a wheeled swerve-drive mobile base, giving it omnidirectional mobility without the complexity and instability of bipedal locomotion.

The platform is built around ROBOTIS's flagship DYNAMIXEL smart actuator ecosystem, specifically the industrial-grade DYNAMIXEL-Y series, and runs on ROS 2 with an open-source software stack licensed under Apache 2.0. An onboard NVIDIA Jetson AGX Orin computer handles perception and real-time control, while a separate workstation with a high-end GPU runs neural network inference for physical AI tasks such as imitation learning and reinforcement learning. ROBOTIS has demonstrated the AI Worker performing convenience store item sorting using NVIDIA's GR00T N1.5 vision-language-action foundation model, achieving a success rate of approximately 85% in live exhibition conditions with only 10 hours of demonstration data.[1]

The AI Worker represents ROBOTIS's transition from a component and education robotics supplier into a full-system integrator for physical AI, building on over 25 years of experience that includes the DARwIn-OP research humanoid, the THORMANG full-size humanoid, TurtleBot mobile robot platforms, and the GAEMI autonomous delivery robot.

ROBOTIS was founded in 1999 in Seoul, South Korea, by Kim Byoung-soo (also known as Bill Kim) along with two other engineers. The company name is a contraction of "Robot Is...", reflecting the founders' open-ended question: "What is a robot?" From its earliest days, ROBOTIS focused on building modular, intelligent actuator systems rather than complete robots, a strategy that positioned the company as a critical component supplier to the global robotics research and education community.[2]

The company's first and most consequential product line was DYNAMIXEL, an all-in-one smart servo actuator that integrates a DC motor, reduction gearhead, controller, driver, and communication network into a single modular package. DYNAMIXEL actuators quickly became the de facto standard for small-to-medium humanoid robots in academic research, competition robotics, and education. By the mid-2010s, an estimated 95% of teams competing in the RoboCup Humanoid League were using platforms built with DYNAMIXEL actuators.[3]

In 2009, ROBOTIS established a US subsidiary in Corona, California, expanding its commercial reach to North America and Europe. By the mid-2020s, ROBOTIS products were in use in over 50 countries across Asia, Europe, and the Americas.[4]

In 2018, ROBOTIS became the first robotics company in South Korea to be listed on the KOSDAQ stock exchange (ticker: 108490) via a technology special listing, a milestone that underscored the company's technical credibility and commercial viability. CEO Kim Byoung-soo, who holds approximately 27% of the company's shares, has also served as a director of the Korea Association of Robot Industry and the Korea Robotics Society, and as president of the STEAM Education Association.[5]

The DYNAMIXEL product line has evolved through several generations, each targeting different performance tiers:

| Series | Target use | Key features |
|---|---|---|
| DYNAMIXEL AX / MX | Education and hobby robotics | Affordable, lightweight, widely adopted in classroom kits |
| DYNAMIXEL XL / XM / XH | Research platforms and mid-range robots | Higher torque, metal gears, TTL/RS-485 communication |
| DYNAMIXEL-P (PRO) | Professional and industrial robots | High torque (up to 44.2 Nm), used in THORMANG3 |
| DYNAMIXEL-Y | Full-scale industrial robots and humanoids | Frameless motor, cycloidal reduction (DYD), multi-turn absolute encoder (19-bit single-turn / 18-bit multi-turn), optional integrated brake, hollow-shaft design |

The DYNAMIXEL-Y series, introduced alongside the AI Worker, represents ROBOTIS's push into industrial-grade robotics. These actuators feature cycloidal reduction gears (branded as DYNAMIXEL Drive, or DYD) that provide high reduction ratios in a compact form factor while tolerating shock loads better than traditional gear trains. The 19-bit single-turn encoder (524,288 pulses per revolution) and 18-bit multi-turn capability (262,144 revolutions) deliver extremely fine positional control. A backup battery retains multi-turn position information even after complete power loss, eliminating the need for homing procedures after power cycles.[6]
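As a worked check on the encoder figures above, the following sketch derives the counts and the angular step implied by the stated bit depths. The helper name `counts_to_radians` is hypothetical, and the result is only the nominal quantization step: achievable accuracy also depends on details the source does not give, such as where the encoder sits relative to the reduction stage.

```python
import math

SINGLE_TURN_BITS = 19  # from the text
MULTI_TURN_BITS = 18   # from the text

counts_per_rev = 2 ** SINGLE_TURN_BITS   # 524,288 pulses per revolution
max_revolutions = 2 ** MULTI_TURN_BITS   # 262,144 trackable revolutions

# Nominal angular step represented by one encoder count.
resolution_deg = 360.0 / counts_per_rev
resolution_rad = 2.0 * math.pi / counts_per_rev


def counts_to_radians(counts: int) -> float:
    """Convert a raw single-turn count to radians (hypothetical helper)."""
    return counts * resolution_rad


print(f"{counts_per_rev} counts/rev, {max_revolutions} revolutions")
print(f"{resolution_deg:.7f} degrees per count")
```

One count therefore corresponds to well under a thousandth of a degree, which is the basis for the fine positional control described above.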

DYNAMIXEL actuators share a common daisy-chain serial protocol, carried over TTL or RS-485 depending on the series, that allows multiple actuators to be networked on a single bus at up to 4 Mbps. This design philosophy, treating actuators as networked nodes rather than isolated motors, is central to ROBOTIS's system architecture and directly informs the AI Worker's control stack.

Before the AI Worker, ROBOTIS developed or co-developed several influential robot platforms:

DARwIn-OP (2010): Short for Dynamic Anthropomorphic Robot with Intelligence, Open Platform, the DARwIn-OP was a miniature humanoid developed in collaboration with Virginia Tech's Robotics and Mechanisms Laboratory (RoMeLa), led by Professor Dennis Hong, along with Purdue University and the University of Pennsylvania. The project was funded by a $1.2 million grant from the National Science Foundation. Standing approximately 45 cm tall with 20 degrees of freedom (each powered by a DYNAMIXEL MX-28T servo), DARwIn-OP became one of the most widely used research humanoid platforms in the world. It won the Kid Size League at RoboCup in 2011, 2012, and 2013. ROBOTIS later released updated versions as the ROBOTIS OP2 and OP3.[7][8]

THORMANG (2015): THORMANG (Tactical Hazardous Operations Robot, Manipulator) was ROBOTIS's first full-size humanoid platform, standing approximately 140 cm tall with 29 degrees of freedom powered by DYNAMIXEL-P (PRO) actuators. THORMANG participated in the DARPA Robotics Challenge (DRC) Finals in Pomona, California in June 2015 as one of the 25 finalist teams. Team SNU (Seoul National University) and a separate Team ROBOTIS both fielded THORMANG-based platforms at the competition. While THORMANG was one of the smallest robots at the DRC Finals, it demonstrated ROBOTIS's ability to build a full-size humanoid system. The experience also exposed limitations: during the surprise task, Team ROBOTIS's THORMANG 2 fell and lost its head while attempting to manipulate electrical plugs.[9][10]

TurtleBot3 (2017): Co-developed with Open Robotics, TurtleBot3 became one of the most popular educational mobile robot platforms in the ROS ecosystem. Available in Burger and Waffle configurations, TurtleBot3 uses DYNAMIXEL actuators and supports SLAM, autonomous navigation, and manipulation (when paired with the OpenMANIPULATOR-X arm). The platform runs on a Raspberry Pi 4 or NVIDIA Jetson Nano and has become a standard teaching tool in university robotics courses worldwide.[11]

OpenMANIPULATOR-X: An open-source, low-cost robotic arm using DYNAMIXEL X-series actuators with 3D-printable structural components. Compatible with TurtleBot3 for mobile manipulation research, OpenMANIPULATOR comes in seven configurations (Chain, SCARA, Link, Planar, Delta, Stewart, and Linear) and supports full ROS integration.[12]

GAEMI (2020s): ROBOTIS's autonomous mobile robot (AMR) line for commercial delivery. The indoor model (GAEMI-0) operates in apartment complexes and office buildings, using an integrated manipulator arm with a 3D camera to press elevator buttons, scan access cards, and knock on doors. The outdoor model (GAEMI-1) handles last-mile parcel and food delivery. Major hotel chains in South Korea and Japan, including Courtyard by Marriott, Novotel, and Ibis Styles, have deployed GAEMI robots for in-hotel delivery services.[13]

The AI Worker emerged from ROBOTIS's recognition that the global manufacturing sector faces acute and worsening labor shortages, particularly for repetitive, physically demanding tasks such as wiring harness assembly, visual inspection, and material handling. Rather than building a general-purpose bipedal humanoid (as pursued by companies like Tesla, Figure AI, and Boston Dynamics), ROBOTIS opted for a semi-humanoid design that prioritizes practical industrial deployment: a human-like upper body for manipulation mounted on a wheeled base for reliable, vibration-free mobility.[14]

This design choice reflects engineering pragmatism. Bipedal locomotion remains an unsolved problem at industrial reliability levels; even the most advanced bipedal humanoids in 2025 and 2026 cannot guarantee the 24/7 uptime required in factory environments. A swerve-drive base removes the balance-related fall risk of legged locomotion while providing omnidirectional movement in confined spaces.

The AI Worker is available in two variants:

| Model | Designation | Description |
|---|---|---|
| FFW-SG2 | Mobile variant | Full platform with swerve-drive mobile base, 25 DOF total |
| FFW-BG2 | Stationary variant | Upper body only (no mobile base), 19 DOF total, designed for fixed workstation mounting |

Both variants share the same 19-DOF upper body: dual 7-DOF arms, a 2-DOF head, a 1-DOF gripper on each arm, and a 1-DOF vertical lift mechanism. The mobile FFW-SG2 adds a 6-DOF swerve-drive base with three wheels, each independently steered and driven, arranged in a triangular configuration.

The FFW designation stands for "Freedom From Work," reflecting ROBOTIS's stated goal of liberating human workers from repetitive, ergonomically harmful, or hazardous industrial tasks. The name positions the AI Worker not as a replacement for human workers but as a tool that handles the tasks humans prefer not to do, allowing human workers to focus on higher-value activities requiring judgment, creativity, and complex decision-making.[15]

| Category | Specification | FFW-SG2 (Mobile) | FFW-BG2 (Stationary) |
|---|---|---|---|
| Dimensions | Overall (W x D x H) | 604 x 602 x 1,623 mm | 604 x 564 x 1,607 mm |
| Weight | Total | 90 kg (198 lb) | 85 kg (187 lb) |
| Materials | Construction | Aluminum, plastic | Aluminum, plastic |
| DOF | Total | 25 | 19 |
| DOF | Arms | 7 per arm (14 total) | 7 per arm (14 total) |
| DOF | Grippers | 1 per gripper (2 total) | 1 per gripper (2 total) |
| DOF | Head | 2 | 2 |
| DOF | Lift | 1 | 1 |
| DOF | Mobile base | 6 | N/A |
| Operating temp | Range | 0 to 40 °C | 0 to 40 °C |

| Parameter | Value |
|---|---|
| Arm reach | 641 mm (to wrist) + gripper length |
| Nominal payload (single arm) | 3.0 kg |
| Nominal payload (dual arm) | 6.0 kg |
| Peak payload (single arm) | 5.0 kg |
| Peak payload (dual arm) | 10.0 kg |
| Joint range | -π to +π rad (full rotation) |
| Encoder resolution | 524,288 pulses/revolution (single-turn) |
| Multi-turn range | 262,144 revolutions |
| Gripper model | RH-P12-RN |
| Gripper type | 1-DOF, two-fingered |
| Gripper weight | 500 g |
| Gripper payload | 5 kg |
| Fingertips | Detachable, interchangeable |

| Joint location | Actuator model | Series |
|---|---|---|
| Arm joints 1-3 | YM080-230-R099-RH | DYNAMIXEL-Y |
| Arm joints 4-6 | YM070-210-R099-RH | DYNAMIXEL-Y |
| Arm joint 7 | PH42-020-S300-R | DYNAMIXEL-P |
| Head joints | DYNAMIXEL-X models | DYNAMIXEL-X |
| Lift mechanism | YM080-230-B001-RH | DYNAMIXEL-Y |

The use of DYNAMIXEL-Y actuators in the primary arm joints (joints 1 through 6) provides the high torque density and precision required for industrial manipulation, while the lighter DYNAMIXEL-P and DYNAMIXEL-X actuators handle lower-load joints in the wrist and head, respectively.

| Sensor | Model | Quantity | FOV | Location |
|---|---|---|---|---|
| Head RGB-D camera | Stereolabs ZED Mini | 1 | 102° | Head |
| Hand RGB-D cameras | Intel RealSense D405 | 2 | 87° | Wrists/hands |
| LiDAR | N/A | 2 (SG2 only) | N/A | Mobile base |
| IMU | Integrated | 1 | N/A | Body |

The three RGB-D cameras provide complementary perspectives: the head-mounted ZED Mini offers a wide-angle overview for navigation and scene understanding, while the two wrist-mounted RealSense D405 cameras provide close-range depth data critical for precise grasping and manipulation. The dual LiDARs on the mobile base (SG2 model only) support obstacle avoidance and SLAM-based autonomous navigation.

| Parameter | Value |
|---|---|
| Onboard compute | NVIDIA Jetson AGX Orin, 32 GB RAM |
| Communication | Ethernet (via WiFi or direct connection) |
| Baudrate (DYNAMIXEL bus) | 4 Mbps |
| Battery (SG2) | 25 V, 80 Ah (2,040 Wh) |
| Runtime (SG2) | Up to 6 hours |
| Software stack | ROS 2, Python, C++, Web UI |
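
The battery figures in the table imply a rough power budget for the FFW-SG2. The sketch below assumes the 6-hour runtime corresponds to draining the full rated energy, which the source does not state, so the result is an illustrative estimate rather than a measured value.

```python
# Figures from the table above
BATTERY_ENERGY_WH = 2040.0
BATTERY_CAPACITY_AH = 80.0
MAX_RUNTIME_H = 6.0  # "up to" figure

# Average draw if the full rated energy lasts the full runtime (assumption).
average_power_w = BATTERY_ENERGY_WH / MAX_RUNTIME_H

# Pack voltage implied by the energy and capacity ratings.
implied_voltage_v = BATTERY_ENERGY_WH / BATTERY_CAPACITY_AH

print(f"average draw: {average_power_w:.0f} W")
print(f"implied pack voltage: {implied_voltage_v:.1f} V")
```

The implied voltage comes out slightly above the listed 25 V, which suggests the voltage in the table is a rounded nominal value.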

The FFW-SG2's swerve drive system uses three independently steered and driven wheels positioned in a triangular arrangement. This configuration provides several advantages over alternative mobile base designs:

| Drive type | Ground contact | Vibration | Payload stability | Omnidirectional |
|---|---|---|---|---|
| Swerve drive (AI Worker) | Full wheel surface | Low | High | Yes |
| Mecanum wheels | Small rollers only | High | Moderate | Yes |
| Omniwheels | Limited roller contact | High | Low | Yes |
| Differential drive | Full wheel surface | Low | High | No (cannot strafe) |

The continuous full-surface wheel contact of the swerve drive produces significantly less vibration during movement compared to mecanum or omniwheel alternatives. This is critical for tasks requiring precise manipulation: vibration transmitted through the base to the arms would degrade positioning accuracy. The swerve drive achieves a maximum velocity of 1.5 m/s while supporting omnidirectional motion (forward, backward, lateral strafing, and rotation in place).[16]
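The omnidirectional motion described above follows from standard swerve-drive inverse kinematics: each wheel is steered along, and driven at, the velocity its contact point must have for the base to execute a commanded twist. The following is a minimal sketch of that textbook calculation, not the code in `ffw_swerve_drive_controller`. The wheel layout (three modules evenly spaced on a circle), the 0.25 m radius, and the use of 1.5 m/s as a per-wheel speed limit are all assumptions made for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class WheelCommand:
    steer_angle: float  # rad, measured in the base frame
    speed: float        # m/s along the wheel's rolling direction


def wheel_positions(radius: float, n: int = 3):
    """Hypothetical layout: n wheel modules evenly spaced on a circle."""
    return [
        (radius * math.cos(2 * math.pi * i / n),
         radius * math.sin(2 * math.pi * i / n))
        for i in range(n)
    ]


def swerve_inverse_kinematics(vx, vy, omega, positions, max_wheel_speed=1.5):
    """Map a base twist (vx, vy in m/s, omega in rad/s) to wheel commands."""
    raw = []
    for x, y in positions:
        # Contact-point velocity = base velocity + omega cross wheel offset.
        wx = vx - omega * y
        wy = vy + omega * x
        raw.append((math.atan2(wy, wx), math.hypot(wx, wy)))

    # Scale all wheels together so none exceeds the assumed limit,
    # which preserves the direction of the commanded motion.
    fastest = max(speed for _, speed in raw)
    scale = max_wheel_speed / fastest if fastest > max_wheel_speed else 1.0
    return [WheelCommand(angle, speed * scale) for angle, speed in raw]


if __name__ == "__main__":
    layout = wheel_positions(radius=0.25)
    # Pure lateral strafe: every wheel points sideways at the same speed.
    for cmd in swerve_inverse_kinematics(0.0, 0.5, 0.0, layout):
        print(cmd)
```

For a pure strafe every wheel receives the same angle and speed, while a rotation in place steers each wheel tangent to the circle through the wheel centers. A real controller would also need to handle steering-angle wraparound and wheel reversal, which this sketch omits.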

The AI Worker's software stack is built entirely on ROS 2 (Robot Operating System 2), the industry-standard middleware for robotics. The open-source repository on GitHub (ROBOTIS-GIT/ai_worker, Apache 2.0 license) contains the following ROS 2 packages:[17]

| Package | Function |
|---|---|
| ffw | Core framework |
| ffw_bringup | Startup and initialization configuration |
| ffw_description | URDF robot model definitions |
| ffw_joint_trajectory_command_broadcaster | Joint trajectory control interface |
| ffw_joystick_controller | Gamepad teleoperation support |
| ffw_moveit_config | MoveIt 2 motion planning configuration |
| ffw_navigation | ROS 2 Nav2 autonomous navigation |
| ffw_robot_manager | Central robot state management |
| ffw_spring_actuator_controller | Compliant (spring-like) actuator control |
| ffw_swerve_drive_controller | Omnidirectional swerve drive locomotion |
| ffw_teleop | Remote teleoperation capabilities |

The codebase is approximately 51% C++ and 41% Python, with the remainder in CMake, Shell, and C. As of early 2026, the repository has accumulated 593 commits across 26 releases (latest: v1.2.1), with 133 stars and 35 forks on GitHub.[18]

The AI Worker's autonomous navigation is built on the ROS 2 Nav2 stack with two selectable path-tracking controllers:

Regulated Pure Pursuit (RPP): A stable baseline controller that uses lookahead-based path tracking. RPP is well suited for differential-drive-like motion along longer paths.

Model Predictive Path Integral (MPPI) Controller: A more advanced holonomic-capable controller that supports the full omnidirectional motion of the swerve drive, including crab-walking and simultaneous translation and rotation.

Custom Behavior Tree nodes allow the system to automatically select the appropriate controller based on the planned global path. For short, precise positioning tasks, MPPI provides superior omnidirectional control; for longer traversals, RPP offers stable, energy-efficient path following.[19]
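The selection rule described above can be illustrated with a small sketch. The source says only that the choice depends on the planned global path; using total path length with a fixed threshold is an assumption made here, and the function names and the 2.0 m threshold are hypothetical. The actual selection is performed by Behavior Tree nodes inside Nav2, not by standalone Python code like this.

```python
import math


def path_length(waypoints):
    """Sum of straight-line distances between consecutive (x, y) waypoints."""
    return sum(math.dist(a, b) for a, b in zip(waypoints, waypoints[1:]))


def select_controller(waypoints, short_path_threshold_m=2.0):
    """Pick a controller name for a planned path (hypothetical rule).

    Short paths go to MPPI for precise omnidirectional positioning;
    longer traversals go to Regulated Pure Pursuit (RPP).
    """
    if path_length(waypoints) < short_path_threshold_m:
        return "MPPI"
    return "RPP"


if __name__ == "__main__":
    docking_move = [(0.0, 0.0), (0.3, 0.4)]
    aisle_run = [(0.0, 0.0), (3.0, 4.0), (3.0, 10.0)]
    print(select_controller(docking_move))
    print(select_controller(aisle_run))
```

A production rule might also weigh path curvature or the required final heading; length alone is used here to keep the example short.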

ROBOTIS provides simulation models for multiple environments:

| Simulator | Support level |
|---|---|
| MuJoCo | URDF/MJCF models available (via robotis_mujoco_menagerie repository) |
| NVIDIA Isaac Sim | Full integration for sim-to-real transfer |
| Gazebo | ROS 2 native integration |

These simulation tools enable researchers to develop and validate manipulation policies, navigation behaviors, and AI models in simulation before deploying them on the physical robot, following the sim-to-real transfer paradigm that has become standard in modern robotics research.[20]

The AI Worker's primary learning paradigm is imitation learning, specifically based on the Action Chunking with Transformers (ACT) framework. The learning pipeline follows these steps:

ROBOTIS demonstrated a notable integration of the AI Worker with NVIDIA's GR00T N1.5 vision-language-action (VLA) foundation model. The deployment architecture separates the robot controller (running on the Jetson AGX Orin) from the inference server (running on an NVIDIA RTX 5090 workstation), connected via ROS 2 topics and ZMQ sockets. This separation allows the inference compute to scale independently of the robot controller.

Key results from the demonstration:

| Parameter | Value |
|---|---|
| Task | Convenience store item sorting |
| Training data | 10 hours across 800 episodes |
| Training hardware | 8x NVIDIA B200 GPUs |
| Training time | Approximately 20 hours |
| Success rate | Approximately 85% (in live exhibition conditions) |
| Demonstrations needed | As few as 20-40 for basic task acquisition |
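
The figures in the table can be turned into a few derived quantities. This is back-of-the-envelope arithmetic that assumes episodes are of roughly equal length and that all eight GPUs were in use for the whole training run; the source confirms neither assumption.

```python
# Figures from the results table
DEMO_HOURS = 10
EPISODES = 800
TRAIN_GPUS = 8
TRAIN_HOURS = 20  # approximate

# Average demonstration length, assuming equal-length episodes.
mean_episode_s = DEMO_HOURS * 3600 / EPISODES

# Total training compute, assuming all GPUs ran for the full duration.
gpu_hours = TRAIN_GPUS * TRAIN_HOURS


def demo_minutes(n_episodes: int) -> float:
    """Teleoperation time needed for n episodes of average length."""
    return n_episodes * mean_episode_s / 60


print(f"mean episode: {mean_episode_s:.0f} s")
print(f"training compute: {gpu_hours} GPU-hours")
print(f"20 to 40 demos: {demo_minutes(20):.0f} to {demo_minutes(40):.0f} min")
```

Under these assumptions, the 20 to 40 demonstrations cited for basic task acquisition would amount to well under an hour of teleoperation.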

The GR00T N1.5 model uses a two-system architecture combining a Vision-Language Model (for understanding the scene and task instructions) with a Diffusion Transformer (for generating precise action trajectories). By fine-tuning this foundation model on task-specific demonstration data, ROBOTIS achieved strong performance with significantly less data than would be required for training from scratch.[22]

ROBOTIS emphasizes robustness through intentional environmental diversity during training data collection. Demonstration data is gathered under deliberately varied lighting conditions, backgrounds, and object arrangements. This approach ensures that trained policies generalize to the unpredictable conditions found in real industrial environments rather than overfitting to a single controlled setup.[23]

The AI Worker targets several categories of industrial automation tasks:

| Application | Description |
|---|---|
| Wiring harness assembly | Manipulating flexible cables and connectors, a task that is notoriously difficult to automate with conventional industrial robots |
| Precision welding | Executing welding operations with millimeter-level accuracy using learned trajectories |
| Visual inspection | Using RGB-D cameras and trained vision models to detect defects on production lines |
| Material handling and logistics | Picking, placing, and sorting items in warehouse and factory environments |
| Convenience store operations | Sorting and stocking items (demonstrated with GR00T N1.5) |

ROBOTIS has described three solution levels addressing different capability requirements:

| Level | Capability |
|---|---|
| Level 1 | Cost-efficient AI manipulation with single-arm operation |
| Level 2 | Dual-arm coordination for more complex assembly tasks |
| Level 3 | Full semi-humanoid two-handed manipulation with imitation learning and free policy training |

Early commercial validation has come through partnerships with CJ Korea Express (logistics) and BGF Retail (convenience store operations) in South Korea.[24]

On November 19, 2024, ROBOTIS announced a research collaboration with the Massachusetts Institute of Technology (MIT) to develop "Physical AI" technology. The partnership is part of an international joint research and development project organized by the Korea Institute for Advancement of Technology (KIAT) under South Korea's Ministry of Trade, Industry and Energy, with total project funding of up to 10 billion Korean won (approximately US$7.5 million).[25]

The collaboration aims to develop technology that achieves human-level manipulation capabilities, requiring AI that can actively sense and respond to real-world environmental changes, control reflexive reactions, and learn from each situation. The Physical AI technology is expected to be applied first to ROBOTIS's OpenMANIPULATOR-Y (OM-Y) collaborative robot arm, enabling it to perform dexterous manipulation tasks beyond the capabilities of current gripper-based systems.

CEO Kim Byoung-soo stated that Physical AI represents "the biggest topic in the robotics industry" and expressed confidence that combining "ROBOTIS's experience and technological prowess" with "the distinguished scholars of MIT" would accelerate development of next-generation robot intelligence.[26]

ROBOTIS's influence on competitive robotics, particularly the annual RoboCup tournament, has been substantial and long-running. The company's impact spans multiple competition categories:

The DARwIn-OP platform, powered by 20 DYNAMIXEL MX-28T servos, won the Kid Size League at RoboCup three consecutive years (2011, 2012, 2013). Team DARwIn, fielded by Virginia Tech's RoMeLa lab, defeated 24 international teams at RoboCup 2011 in Istanbul, Turkey. The DARwIn-OP's success led to widespread commercial adoption of the platform; by 2014, over 50% of all Kid Size League entries used the DARwIn-OP base platform.[27]

Beyond the DARwIn-OP platform itself, DYNAMIXEL actuators became the dominant actuator choice across all RoboCup humanoid categories. An estimated 95% of teams in the RoboCup Humanoid League use robotics platforms based on DYNAMIXEL actuators. Teams that do not use complete ROBOTIS platforms frequently build custom robot designs around DYNAMIXEL servos, taking advantage of the actuators' networked communication, compact form factor, and integrated feedback capabilities.[28]

ROBOTIS's participation in the 2015 DARPA Robotics Challenge Finals with the THORMANG platform demonstrated ambitions beyond the education and research market. Neither Team ROBOTIS nor Team SNU (Seoul National University), which also used a THORMANG-based robot, won the competition; the title went to South Korea's Team KAIST with its DRC-HUBO robot. Even so, the experience gave ROBOTIS valuable lessons in operating a full-size humanoid in unstructured environments. These lessons directly informed the design decisions behind the AI Worker, including the choice of a wheeled base over bipedal locomotion for industrial reliability.[29]

The AI Worker occupies a distinctive niche in the humanoid and semi-humanoid robot market. While most high-profile humanoid projects pursue bipedal locomotion, the AI Worker's wheeled semi-humanoid design places it in a category alongside mobile manipulator platforms:

| Company | Robot | Form factor | Focus area | Key differentiator |
|---|---|---|---|---|
| ROBOTIS | AI Worker | Wheeled semi-humanoid | Industrial physical AI | DYNAMIXEL ecosystem; open-source ROS 2; imitation learning |
| Tesla | Optimus | Bipedal humanoid | General-purpose factory work | Projected low cost (~$30K); Tesla manufacturing scale |
| Figure AI | Figure 03 | Bipedal humanoid | General-purpose | $39B valuation; OpenAI partnership |
| Agility Robotics | Digit | Bipedal humanoid | Warehouse logistics | Most commercially deployed; 100K+ totes moved |
| Boston Dynamics | Atlas | Bipedal humanoid | Research/industrial | Hyundai backing; decades of locomotion research |
| Apptronik | Apollo | Bipedal humanoid | General-purpose industrial | NASA heritage; Mercedes-Benz partnership |
| Sanctuary AI | Phoenix | Bipedal humanoid | General-purpose | Dexterous hands; Carbon AI system |

The AI Worker's competitive advantages lie in its open-source software ecosystem, the mature and widely adopted DYNAMIXEL actuator platform, the reliability of its wheeled base for industrial environments, and its established physical AI pipeline integrating teleoperation, imitation learning, and foundation model inference. Its primary limitation compared to bipedal humanoids is the inability to navigate stairs, step over obstacles, or operate in environments designed exclusively for bipedal access.

ROBOTIS has made the AI Worker one of the most comprehensively open-sourced humanoid platforms available:

| Resource | Location |
|---|---|
| Core ROS 2 packages | GitHub: ROBOTIS-GIT/ai_worker (Apache 2.0) |
| Physical AI tools | GitHub: ROBOTIS-GIT/physical_ai_tools |
| Reinforcement learning tutorials | GitHub: ROBOTIS-GIT/robotis_lab (Apache 2.0) |
| MuJoCo simulation models | GitHub: ROBOTIS-GIT/robotis_mujoco_menagerie |
| Pre-trained models and datasets | HuggingFace: huggingface.co/ROBOTIS |
| Docker images | Official Docker Hub images |
| Documentation | ai.robotis.com |
| Video tutorials | ROBOTIS YouTube channel |

This open-source strategy mirrors the approach that made DARwIn-OP and TurtleBot3 successful: by lowering barriers to adoption and enabling community contribution, ROBOTIS builds ecosystem lock-in through developer familiarity rather than proprietary restrictions. Researchers and developers who learn ROS 2 robotics on TurtleBot3 with DYNAMIXEL actuators can transfer their skills directly to the AI Worker platform.[30]

ROBOTIS has announced several planned developments for 2026 and beyond:

The AI Worker FFW-SG2 (mobile variant) is available for purchase through ROBOTIS's US subsidiary. Due to the made-to-order nature of the product, standard lead time is approximately 8 weeks. Units ship directly from ROBOTIS's manufacturing facility in South Korea, with import tariffs included in the quoted price. Pricing is not publicly listed; prospective buyers must contact ROBOTIS directly (america@robotis.com or 949-377-0377) for a quote.[32]

Target markets include manufacturing, logistics, and research and development. The platform's open-source software stack and comprehensive documentation make it particularly attractive to academic research groups and corporate R&D labs working on physical AI, computer vision, manipulation planning, and robot learning.